torch.nn

Parameters(参数)

class torch.nn.Parameter

Parameters对象是一种会被视为模块参数(module parameter)的Tensor张量。

Parameters类是Tensor 的子类, 不过相对于它的父类,Parameters类有一个很重要的特性就是当其在 Module类中被使用并被当做这个Module类的模块属性的时候,那么这个Parameters对象会被自动地添加到这个Module类的参数列表(list of parameters)之中,同时也就会被添加入此Module类的 parameters()方法所返回的参数迭代器中。而Parameters类的父类Tensor类也可以被用为构建模块的属性,但不会被加入参数列表。这样主要是因为,有时可能需要在模型中存储一些非模型参数的临时状态,比如RNN中的最后一个隐状态。而通过使用非Parameter的Tensor类,可以将这些临时变量注册(register)为模型的属性的同时使其不被加入参数列表。

Parameters:

- data (Tensor) – 参数张量(parameter tensor).

- requires_grad (bool, optional) – 参数是否需要梯度, 默认为

True。更多细节请看 如何将子图踢出反向传播过程。

Containers(容器)

Module(模块)

class torch.nn.Module

模块(Module)是所有神经网络模型的基类。

你创建模型的时候也应该继承这个类哦。

模块(Module)中还可以包含其他的模块,你可以将一个模块赋值成为另一个模块的属性,从而成为这个模块的一个子模块。而通过不断的赋值,你可以将不同的模块组织成一个树结构:

import torch.nn as nn

import torch.nn.functional as F

class Model(nn.Module):

def __init__(self):

super(Model, self).__init__()

self.conv1 = nn.Conv2d(1, 20, 5) # 当前的nn.Conv2d模块就被赋值成为Model模块的一个子模块,成为“树结构”的叶子

self.conv2 = nn.Conv2d(20, 20, 5)

def forward(self, x):

x = F.relu(self.conv1(x))

return F.relu(self.conv2(x))

通过赋值这种方式添加的子模块将会被模型注册(register),而后当调用模块的一些参数转换函数(to())的时候,子模块的参数也会一并转换。

add_module(name, module)

向当前模块添加一个子模块。 此子模块可以作为当前模块的属性被访问到,而属性名就是add_module()函数中的name参数。

add_module()函数参数:

- name (string) – 子模块的名字. 函数调用完成后,可以通过访问当前模块的此字段来访问该子模块。

- parameter (Module) – 要添加到当前模块的子模块。

apply(fn)

apply()函数的主要作用是将 fn 递归地应用于模块的所有子模块(.children()函数的返回值)以及模块自身。此函数的一个经典应用就是初始化模型的所有参数这一过程(同样参见于 torch-nn-init)。

| Parameters: | fn (Module -> None) – 要应用于所有子模型的函数 |

|---|---|

| Returns: | self |

| --- | --- |

| Return type: | Module |

| --- | --- |

例子:

>>> def init_weights(m):

print(m)

if type(m) == nn.Linear:

m.weight.data.fill_(1.0)

print(m.weight)

>>> net = nn.Sequential(nn.Linear(2, 2), nn.Linear(2, 2))

>>> net.apply(init_weights) # 将init_weights()函数应用于模块的所有子模块

Linear(in_features=2, out_features=2, bias=True)

Parameter containing:

tensor([[ 1., 1.],

[ 1., 1.]])

Linear(in_features=2, out_features=2, bias=True)

Parameter containing:

tensor([[ 1., 1.],

[ 1., 1.]])

Sequential(

(0): Linear(in_features=2, out_features=2, bias=True)

(1): Linear(in_features=2, out_features=2, bias=True)

)

Sequential(

(0): Linear(in_features=2, out_features=2, bias=True)

(1): Linear(in_features=2, out_features=2, bias=True)

)

buffers(recurse=True)

返回模块的缓冲区的迭代器

| Parameters: | recurse (bool) – 如果设置为True,产生的缓冲区迭代器会遍历模块自己与所有子模块,否则只会遍历模块的直连的成员。 |

|---|---|

| Yields: | torch.Tensor – 模型缓冲区 |

| --- | --- |

举例:

>>> for buf in model.buffers():

>>> print(type(buf.data), buf.size())

<class 'torch.FloatTensor'> (20L,)

<class 'torch.FloatTensor'> (20L, 1L, 5L, 5L)

children()

返回一个当前所有子模块的迭代器 Returns an iterator over immediate children modules.

| Yields: | Module – 子模块 |

|---|---|

cpu()

将模型的所有参数(parameter)和缓冲区(buffer)都转移到CPU内存中。

| Returns: | self |

|---|---|

| Return type: | Module |

| --- | --- |

cuda(device=None)

将模型的所有参数和缓冲区都转移到CUDA设备内存中。

因为cuda()函数同时会将处理模块中的所有参数并缓存这些参数的对象。所以如果想让模块在GPU上进行优化操作,一定要在构建优化器之前调用模块的cuda()函数。

| Parameters: | device (int, optional) – 如果设备编号被指定,所有的参数都会被拷贝到编号指定设备上 |

|---|---|

| Returns: | self |

| --- | --- |

| Return type: | Module |

| --- | --- |

double()

将所有的浮点数类型的参数(parameters)和缓冲区(buffers)转换为double数据类型。

| Returns: | self |

|---|---|

| Return type: | Module |

| --- | --- |

dump_patches = False

这个字段可以为load_state_dict()提供 BC 支持(BC support实在不懂是什么意思-.-)。 在 state_dict()函数返回的状态字典(state dict)中, 有一个名为_metadata的属性中存储了这个state_dict的版本号。_metadata是一个遵从了状态字典(state dict)的命名规范的关键字字典, 要想了解这个_metadata在加载状态(loading state dict)的时候是怎么用的,可以看一下 _load_from_state_dict部分的文档。

如果新的参数/缓冲区被添加于/移除自这个模块之中时,这个版本号数字会随之发生变化。同时模块的_load_from_state_dict方法会比较版本号的信息并依据此状态词典(state dict)的变化做出一些适当的调整。

eval()

将模块转换为测试模式。

这个函数只对特定的模块类型有效,如 Dropout和BatchNorm等等。如果想了解这些特定模块在训练/测试模式下各自的运作细节,可以看一下这些特殊模块的文档部分。

extra_repr()

为模块设置额外的展示信息(extra representation)。

如果想要打印展示(print)你的模块的一些定制的额外信息,那你应该在你的模块中复现这个函数。单行和多行的字符串都可以被接受。

float()

将所有浮点数类型的参数(parameters)和缓冲区(buffers)转换为float数据类型。

| Returns: | self |

|---|---|

| Return type: | Module |

| --- | --- |

forward(*input)

定义了每次模块被调用之后所进行的计算过程。

应该被Module类的所有子类重写。

Note

尽管模块的前向操作都被定义在这个函数里面,但是当你要进行模块的前向操作的时候,还是要直接调用模块Module 的实例函数,而不是直接调用这个forward()函数。这主要是因为前者会照顾到注册在此模块之上的钩子函数(the registered hooks)的运行,而后者则不会。

half()

将所有的浮点数类型的参数(parameters)和缓冲区(buffers)转换为half数据类型。

| Returns: | self |

|---|---|

| Return type: | Module |

| --- | --- |

load_state_dict(state_dict, strict=True)

将state_dict中的参数(parameters)和缓冲区(buffers)拷贝到模块和其子模块之中。如果strict被设置为True,那么state_dict中的键值(keys)必须与模型的[state_dict()]函数所返回的键值(keys)信息保持完全的一致。

load_state_dict()函数参数:

- state_dict (dict) – 一个包含了参数和持久缓冲区的字典。

- strict (bool, optional) – 是否严格要求

state_dict中的键值(keys)与模型state_dict()函数返回的键值(keys)信息保持完全一致。 默认:True

modules()

返回一个当前模块内所有模块(包括自身)的迭代器。

| Yields: | Module – a module in the network |

|---|---|

Note

注意重复的模块只会被返回一次。比在下面这个例子中,l就只会被返回一次。

例子:

>>> l = nn.Linear(2, 2)

>>> net = nn.Sequential(l, l)

>>> for idx, m in enumerate(net.modules()):

print(idx, '->', m)

0 -> Sequential (

(0): Linear (2 -> 2)

(1): Linear (2 -> 2)

)

1 -> Linear (2 -> 2)

named_buffers(prefix='', recurse=True)

返回一个模块缓冲区的迭代器,每次返回的元素是由缓冲区的名字和缓冲区自身组成的元组。

named_buffers()函数的参数:

- prefix (str) – 要添加在所有缓冲区名字之前的前缀。

- recurse (bool) – 如果设置为True,那样迭代器中不光会返回这个模块自身直连成员的缓冲区,同时也会递归返回其子模块的缓冲区。否则,只返回这个模块直连成员的缓冲区。

| Yields: | (string, torch.Tensor) – 包含了缓冲区的名字和缓冲区自身的元组 |

|---|---|

例子:

>>> for name, buf in self.named_buffers():

>>> if name in ['running_var']:

>>> print(buf.size())

named_children()

返回一个当前模型直连的子模块的迭代器,每次返回的元素是由子模块的名字和子模块自身组成的元组。

| Yields: | (string, Module) – 包含了子模块的名字和子模块自身的元组 |

|---|---|

例子:

>>> for name, module in model.named_children():

>>> if name in ['conv4', 'conv5']:

>>> print(module)

named_modules(memo=None, prefix='')

返回一个当前模块内所有模块(包括自身)的迭代器,每次返回的元素是由模块的名字和模块自身组成的元组。

| Yields: | (string, Module) – 模块名字和模块自身组成的元组 |

|---|---|

Note

重复的模块只会被返回一次。在下面的例子中,l只被返回了一次。

例子:

>>> l = nn.Linear(2, 2)

>>> net = nn.Sequential(l, l)

>>> for idx, m in enumerate(net.named_modules()):

print(idx, '->', m)

0 -> ('', Sequential (

(0): Linear (2 -> 2)

(1): Linear (2 -> 2)

))

1 -> ('0', Linear (2 -> 2))

named_parameters(prefix='', recurse=True)

返回一个当前模块内所有参数的迭代器,每次返回的元素是由参数的名字和参数自身组成的元组。

named_parameters()函数参数:

- prefix (str) – 要在所有参数名字前面添加的前缀。

- recurse (bool) – 如果设置为True,那样迭代器中不光会返回这个模块自身直连成员的参数,同时也会返回其子模块的参数。否则,只返回这个模块直连成员的参数。

| Yields: | (string, Parameter) – 参数名字和参数自身组成的元组 |

|---|---|

例子:

>>> for name, param in self.named_parameters():

>>> if name in ['bias']:

>>> print(param.size())

parameters(recurse=True)

返回一个遍历模块所有参数的迭代器。 parameters()函数一个经典的应用就是实践中经常将此函数的返回值传入优化器。

| Parameters: | recurse (bool) – 如果设置为True,那样迭代器中不光会返回这个模块自身直连成员的参数,同时也会递归返回其子模块的参数。否则,只返回这个模块直连成员的参数。 |

|---|---|

| Yields: | Parameter – 模块参数 |

| --- | --- |

例子:

>>> for param in model.parameters():

>>> print(type(param.data), param.size())

<class 'torch.FloatTensor'> (20L,)

<class 'torch.FloatTensor'> (20L, 1L, 5L, 5L)

register_backward_hook(hook)

在模块上注册一个挂载在反向操作之后的钩子函数。(挂载在backward之后这个点上的钩子函数)

对于每次输入,当模块关于此次输入的反向梯度的计算过程完成,该钩子函数都会被调用一次。此钩子函数需要遵从以下函数签名:

hook(module, grad_input, grad_output) -> Tensor or None

如果模块的输入或输出是多重的(multiple inputs or outputs),那 grad_input 和 grad_output 应当是元组数据。 钩子函数不能对输入的参数grad_input 和 grad_output进行任何更改,但是可以选择性地根据输入的参数返回一个新的梯度回去,而这个新的梯度在后续的计算中会替换掉grad_input。

| Returns: | 一个句柄(handle),这个handle的特点就是通过调用handle.remove()函数就可以将这个添加于模块之上的钩子移除掉。 |

|---|---|

| Return type: | torch.utils.hooks.RemovableHandle |

| --- | --- |

Warning

对于一些具有很多复杂操作的Module,当前的hook实现版本还不能达到完全理想的效果。举个例子,有些错误的情况下,函数的输入参数grad_input 和 grad_output中可能只是真正的输入和输出变量的一个子集。对于此类的Module,你应该使用[torch.Tensor.register_hook()]直接将钩子挂载到某个特定的输入输出的变量上,而不是当前的模块。

register_buffer(name, tensor)

往模块上添加一个持久缓冲区。

这个函数的经常会被用于向模块添加不会被认为是模块参数(model parameter)的缓冲区。举个栗子,BatchNorm的running_mean就不是一个参数,但却属于持久状态。

所添加的缓冲区可以通过给定的名字(name参数)以访问模块的属性的方式进行访问。

register_buffer()函数的参数:

- name (string) – 要添加的缓冲区的名字。所添加的缓冲区可以通过此名字以访问模块的属性的方式进行访问。

- tensor (Tensor) – 需要注册到模块上的缓冲区。

例子:

>>> self.register_buffer('running_mean', torch.zeros(num_features))

register_forward_hook(hook)

在模块上注册一个挂载在前向操作之后的钩子函数。(挂载在forward操作结束之后这个点)

此钩子函数在每次模块的 forward()函数运行结束产生output之后就会被触发。此钩子函数需要遵从以下函数签名:

hook(module, input, output) -> None

此钩子函数不能进行会修改 input 和 output 这两个参数的操作。

| Returns: | 一个句柄(handle),这个handle的特点就是通过调用handle.remove()函数就可以将这个添加于模块之上的钩子移除掉。 |

|---|---|

| Return type: | torch.utils.hooks.RemovableHandle |

| --- | --- |

register_forward_pre_hook(hook)

在模块上注册一个挂载在前向操作之前的钩子函数。(挂载在forward操作开始之前这个点)

此钩子函数在每次模块的 forward()函数运行开始之前会被触发。此钩子函数需要遵从以下函数签名:

The hook will be called every time before forward() is invoked. It should have the following signature:

hook(module, input) -> None

此钩子函数不能进行会修改 input 这个参数的操作。

| Returns: | 一个句柄(handle),这个handle的特点就是通过调用handle.remove()函数就可以将这个添加于模块之上的钩子移除掉。 |

|---|---|

| Return type: | torch.utils.hooks.RemovableHandle |

| --- | --- |

register_parameter(name, param)

向模块添加一个参数(parameter)。

所添加的参数(parameter)可以通过给定的名字(name参数)以访问模块的属性的方式进行访问。

register_parameter()函数的参数:

- name (string) – 所添加的参数的名字. 所添加的参数(parameter)可以通过此名字以访问模块的属性的方式进行访问

- parameter (Parameter) – 要添加到模块之上的参数。

state_dict(destination=None, prefix='', keep_vars=False)

返回一个包含了模块当前所有状态(state)的字典(dictionary)。

所有的参数和持久缓冲区都被囊括在其中。字典的键值就是响应的参数和缓冲区的名字(name)。

| Returns: | 一个包含了模块当前所有状态的字典 |

|---|---|

| Return type: | dict |

| --- | --- |

例子:

>>> module.state_dict().keys()

['bias', 'weight']

to(*args, **kwargs)

移动 并且/或者(and/or)转换所有的参数和缓冲区。

这个函数可以这样调用:

to(device=None, dtype=None, non_blocking=False)

to(dtype, non_blocking=False)

to(tensor, non_blocking=False)

此函数的函数签名跟torch.Tensor.to()函数的函数签名很相似,只不过这个函数dtype参数只接受浮点数类型的dtype,如float, double, half (floating point desired dtype s)。同时,这个方法只会将浮点数类型的参数和缓冲区(the floating point parameters and buffers)转化为dtype(如果输入参数中给定的话)的数据类型。而对于整数类型的参数和缓冲区(the integral parameters and buffers),即便输入参数中给定了dtype,也不会进行转换操作,而如果给定了 device参数,移动操作则会正常进行。当non_blocking参数被设置为True之后,此函数会尽可能地相对于 host 进行异步的 转换/移动 操作,比如,将存储在固定内存(pinned memory)上的CPU Tensors移动到CUDA设备上这一过程既是如此。

例子在下面。

Note

这个方法对模块的修改都是in-place操作。

to()函数的参数:

- device (

torch.device) – 想要将这个模块中的参数和缓冲区转移到的设备。 - dtype (

torch.dtype) – 想要将这个模块中浮点数的参数和缓冲区转化为的浮点数数据类型。 - tensor (torch.Tensor) – 一个Tensor,如果被指定,其dtype和device信息,将分别起到上面两个参数的作用,也就是说,这个模块的浮点数的参数和缓冲区的数据类型将会被转化为这个Tensor的dtype类型,同时被转移到此Tensor所处的设备device上去。

| Returns: | self |

|---|---|

| Return type: | Module |

| --- | --- |

例子:

>>> linear = nn.Linear(2, 2)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]])

>>> linear.to(torch.double)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]], dtype=torch.float64)

>>> gpu1 = torch.device("cuda:1")

>>> linear.to(gpu1, dtype=torch.half, non_blocking=True)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16, device='cuda:1')

>>> cpu = torch.device("cpu")

>>> linear.to(cpu)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16)

train(mode=True)

将模块转换成训练模式。

这个函数只对特定的模块类型有效,如 Dropout和BatchNorm等等。如果想了解这些特定模块在训练/测试模式下各自的运作细节,可以看一下这些特殊模块的文档部分。

| Returns: | self |

|---|---|

| Return type: | Module |

| --- | --- |

type(dst_type)

将所有的参数和缓冲区转化为 dst_type的数据类型。

| Parameters: | dst_type (type or string) – 要转化的数据类型 |

|---|---|

| Returns: | self |

| --- | --- |

| Return type: | Module |

| --- | --- |

zero_grad()

讲模块所有参数的梯度设置为0。

Sequential

class torch.nn.Sequential(*args)

一种顺序容器。传入Sequential构造器中的模块会被按照他们传入的顺序依次添加到Sequential之上。相应的,一个由模块组成的顺序词典也可以被传入到Sequential的构造器中。

为了方便大家理解,举个简单的例子:

# 构建Sequential的例子

model = nn.Sequential(

nn.Conv2d(1,20,5),

nn.ReLU(),

nn.Conv2d(20,64,5),

nn.ReLU()

)

# 利用OrderedDict构建Sequential的例子

model = nn.Sequential(OrderedDict([

('conv1', nn.Conv2d(1,20,5)),

('relu1', nn.ReLU()),

('conv2', nn.Conv2d(20,64,5)),

('relu2', nn.ReLU())

]))

ModuleList (模块列表)

class torch.nn.ModuleList(modules=None)

ModuleList的作用是将一堆模块(module)存储在一个列表之中。

ModuleList 可以按一般的python列表的索引方式进行索引,但ModuleList中的模块都已被正确注册,并且对所有的Module method可见。

| Parameters: | modules (iterable__, optional) – 一个要添加到ModuleList中的由模块组成的可迭代结构(an iterable of modules) |

|---|---|

例子:

class MyModule(nn.Module):

def __init__(self):

super(MyModule, self).__init__()

self.linears = nn.ModuleList([nn.Linear(10, 10) for i in range(10)])

def forward(self, x):

# ModuleList可以被当作一个迭代器,同时也可以使用index索引

for i, l in enumerate(self.linears):

x = self.linears[i // 2](x) + l(x)

return x

append(module)

将一个模块添加到ModuleList的末尾,与python list的append()一致。

| Parameters: | module (nn.Module) – 要添加的模块 |

|---|---|

extend(modules)

将一个由模块组成的可迭代结构添加到ModuleList的末尾,与python list的extend()一致。

| Parameters: | modules (iterable) – 要添加到ModuleList末尾的由模块组成的可迭代结构 |

|---|---|

insert(index, module)

将给定的module插入到ModuleList的index位置。

insert()函数的参数:

ModuleDict (模块词典)

class torch.nn.ModuleDict(modules=None)

ModuleDict的作用是将一堆模块(module)存储在一个词典之中。

ModuleDict 可以按一般的python词典的索引方式进行索引,但ModuleDict中的模块都已被正确注册,并且对所有的Module method可见。

| Parameters: | modules (iterable__, optional) – 一个由(string: module)映射组成的映射集合(词典)或者 一个由(string, module)键/值对组成的可迭代结构 |

|---|---|

Example:

class MyModule(nn.Module):

def __init__(self):

super(MyModule, self).__init__()

self.choices = nn.ModuleDict({

'conv': nn.Conv2d(10, 10, 3),

'pool': nn.MaxPool2d(3)

})

self.activations = nn.ModuleDict([

['lrelu', nn.LeakyReLU()],

['prelu', nn.PReLU()]

])

def forward(self, x, choice, act):

x = self.choices[choice](x)

x = self.activations[act](x)

return x

clear()

移除ModuleDict中所有的元素。

items()

返回一个由ModuleDict中的键/值对组成的可迭代结构。

keys()

返回一个由ModuleDict中的键组成的可迭代结构。

pop(key)

将key这个键从ModuleDict中删除,并将其对应的模块返回。

| Parameters: | key (string) – 要从ModuleDict中弹出的键 |

|---|---|

update(modules)

通过传入的映射或者由键/值对组成的可迭代结构对当前的ModuleDict进行更新,如果传入对象与当前ModuleDict中存在键重复,当前ModuleDict中这些重复的键所对应的值将被覆盖。

| Parameters: | modules (iterable) – 一个由(string: Module)映射组成的映射集合(词典)或者 一个由(string: Module)键/值对组成的可迭代结构 |

|---|---|

values()

返回一个由ModuleDict中的值组成的可迭代结构。

ParameterList (参数列表)

class torch.nn.ParameterList(parameters=None)

ParameterList的作用是将一堆参数(parameter)存储到一个列表中。

ParameterList 可以按一般的python列表的索引方式进行索引,但ParameterList中的参数(parameter)都已被正确注册,并且对所有的Module method可见。

| Parameters: | parameters (iterable__, optional) – 要添加到ParameterList之上的由parameter组成的可迭代结构 |

|---|---|

例子:

class MyModule(nn.Module):

def __init__(self):

super(MyModule, self).__init__()

self.params = nn.ParameterList([nn.Parameter(torch.randn(10, 10)) for i in range(10)])

def forward(self, x):

# ParameterList可以被当作一个迭代器,同时也可以使用index索引

for i, p in enumerate(self.params):

x = self.params[i // 2].mm(x) + p.mm(x)

return x

append(parameter)

将一个parameter添加到ParameterList的末尾。

| Parameters: | parameter (nn.Parameter) – 要添加的参数 |

|---|---|

extend(parameters)

将一个由parameter组成的Python可迭代结构添加到ParameterList的末尾。

| Parameters: | parameters (iterable) – 要添加到ParameterList的末尾的由parameter组成的Python可迭代结构 |

|---|---|

ParameterDict (参数词典)

class torch.nn.ParameterDict(parameters=None)

ParameterDict的作用是将一堆参数(Parameter)存储在一个词典之中。

ParameterDict 可以按一般的python词典的索引方式进行索引,但ParameterDictt中的参数都已被正确注册,并且对所有的Module method可见。

| Parameters: | parameters (iterable__, optional) – 一个由(string:Parameter)映射组成的映射集合(词典)或者 一个由(string, Parameter)键/值对组成的可迭代结构 |

|---|---|

例子:

class MyModule(nn.Module):

def __init__(self):

super(MyModule, self).__init__()

self.params = nn.ParameterDict({

'left': nn.Parameter(torch.randn(5, 10)),

'right': nn.Parameter(torch.randn(5, 10))

})

def forward(self, x, choice):

x = self.params[choice].mm(x)

return x

clear()

移除ParameterDict中所有的元素。

items()

返回一个由ParameterDict中的键/值对组成的可迭代结构。

keys()

返回一个由 ParameterDict中的键组成的可迭代结构。

pop(key)

将key这个键从ParameterDict中删除,并将其对应的模块返回。

| Parameters: | key (string) – 要从ParameterDict中弹出的键 |

|---|---|

update(parameters)

通过传入的映射或者由键/值对组成的可迭代结构对当前的ParameterDict进行更新,如果传入对象与当前ParameterDict中存在键重复,当前ParameterDict中这些重复的键所对应的值将被覆盖。

| Parameters: | parameters (iterable) – modules (iterable) – 一个由(string: Parameter)映射组成的映射集合(词典)或者 一个由(string: Parameter)键/值对组成的可迭代结构 |

|---|---|

values()

返回一个由ParameterDict中的值组成的可迭代结构。

Convolution layers (卷积层)

Conv1d

class torch.nn.Conv1d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True)

利用指定大小的一维卷积核对输入的多通道一维输入信号进行一维卷积操作的卷积层。

在最简单的情况下,对于输入大小为 ,输出大小为

,输出大小为 的一维卷积层,其卷积计算过程可以如下表述:

的一维卷积层,其卷积计算过程可以如下表述:

这里的 符号实际上是一个互相关(cross-correlation) 操作符(大家可以自己查一下互相关和真卷积的区别,互相关因为实现起来很简单,所以一般的深度学习框架都是用互相关操作取代真卷积),

符号实际上是一个互相关(cross-correlation) 操作符(大家可以自己查一下互相关和真卷积的区别,互相关因为实现起来很简单,所以一般的深度学习框架都是用互相关操作取代真卷积),  is a batch size,

is a batch size,  代表通道的数量,

代表通道的数量,  代表信号序列的长度。

代表信号序列的长度。

-

stride参数控制了互相关操作(伪卷积)的步长,参数的数据类型一般是单个数字或者一个只有一个元素的元组。 -

padding参数控制了要在一维卷积核的输入信号的各维度各边上要补齐0的层数。 -

dilation参数控制了卷积核中各元素之间的距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。 -

groups控制了输入输出之间的连接(connections)的数量。in_channels和out_channels必须能被groups整除。举个栗子,> * 当 groups=1, 此Conv1d层会使用一个卷积层进行所有输入到输出的卷积操作。

> * 当 groups=2, 此时Conv1d层会产生两个并列的卷积层。同时,输入通道被分为两半,两个卷积层分别处理一半的输入通道,同时各自产生一半的输出通道。最后这两个卷积层的输出会被concatenated一起,作为此Conv1d层的输出。

> * 当 groups=

in_channels, 每个输入通道都会被单独的一组卷积层处理,这个组的大小是

Note

取决于你卷积核的大小,有些时候输入数据中某些列(最后几列)可能不会参与计算(比如列数整除卷积核大小有余数,而又没有padding,那最后的余数列一般不会参与卷积计算),这主要是因为pytorch中的互相关操作cross-correlation是保证计算正确的操作(valid operation), 而不是满操作(full operation)。所以实际操作中,还是要亲尽量选择好合适的padding参数哦。

Note

当groups == in_channels 并且 out_channels == K * in_channels(其中K是正整数)的时候,这个操作也被称为深度卷积。

举个创建深度卷积层的例子,对于一个大小为  的输入,要构建一个深度乘数为

的输入,要构建一个深度乘数为K的深度卷积层,可以通过以下参数来创建: 。

。

Note

当程序的运行环境是使用了CuDNN的CUDA环境的时候,一些非确定性的算法(nondeterministic algorithm)可能会被采用以提高整个计算的性能。如果不想使用这些非确定性的算法,你可以通过设置torch.backends.cudnn.deterministic = True来让整个计算过程保持确定性(可能会损失一定的计算性能)。对于后端(background),你可以看一下这一部分Reproducibility了解其相关信息。

Conv1d的参数:

- in_channels (int) – 输入通道个数

- out_channels (int) – 输出通道个数

- kernel_size (int or tuple) – 卷积核大小

- stride (int or tuple, optional) – 卷积操作的步长。 默认: 1

- padding (int or tuple, optional) – 输入数据各维度各边上要补齐0的层数。 默认: 0

- dilation (int or tuple, optional) – 卷积核各元素之间的距离。 默认: 1

- groups (int, optional) – 输入通道与输出通道之间相互隔离的连接的个数。 默认:1

- bias (bool, optional) – 如果被置为

True,向输出增加一个偏差量,此偏差是可学习参数。 默认:True

Shape:

-

输入:

-

输出:

其中

其中

| 内部Variables: |

- weight (Tensor) – Conv1d模块中的一个大小为(out_channels, in_channels, kernel_size)的权重张量,这些权重可训练学习(learnable)。这些权重的初始值的采样空间是

, 其中

, 其中 。

。 - bias (Tensor) – 模块的偏差项,大小为(out_channels),可训练学习。如果构造Conv1d时构造函数中的

bias被置为True,那么这些权重的初始值的采样空间是 , 其中

, 其中  。

。

例子:

>>> m = nn.Conv1d(16, 33, 3, stride=2)

>>> input = torch.randn(20, 16, 50)

>>> output = m(input)

Conv2d

class torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True)

利用指定大小的二维卷积核对输入的多通道二维输入信号进行二维卷积操作的卷积层。

在最简单的情况下,对于输入大小为 ,输出大小为

,输出大小为 的二维维卷积层,其卷积计算过程可以如下表述:

的二维维卷积层,其卷积计算过程可以如下表述:

这里的 符号实际上是一个二维互相关(cross-correlation) 操作符(大家可以自己查一下互相关和真卷积的区别,互相关因为实现起来很简单,所以一般的深度学习框架都是用互相关操作取代真卷积),

符号实际上是一个二维互相关(cross-correlation) 操作符(大家可以自己查一下互相关和真卷积的区别,互相关因为实现起来很简单,所以一般的深度学习框架都是用互相关操作取代真卷积),  is a batch size,

is a batch size,  代表通道的数量,

代表通道的数量,  是输入的二维数据的像素高度,

是输入的二维数据的像素高度, 是输入的二维数据的像素宽度。

是输入的二维数据的像素宽度。

-

stride参数控制了互相关操作(伪卷积)的步长,参数的数据类型一般是单个数字或者一个只有一个元素的元组。 -

padding参数控制了要在二维卷积核的输入信号的各维度各边上要补齐0的层数。 -

dilation参数控制了卷积核中各元素之间的距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。 -

groups控制了输入输出之间的连接(connections)的数量。in_channels和out_channels必须能被groups整除。举个栗子,> * 当 groups=1, 此Conv1d层会使用一个卷积层进行所有输入到输出的卷积操作。

> * 当 groups=2, 此时Conv1d层会产生两个并列的卷积层。同时,输入通道被分为两半,两个卷积层分别处理一半的输入通道,同时各自产生一半的输出通道。最后这两个卷积层的输出会被concatenated一起,作为此Conv1d层的输出。

> * 当 groups=

in_channels, 每个输入通道都会被单独的一组卷积层处理,这个组的大小是

kernel_size, stride, padding, dilation这几个参数均支持一下输入形式:

- 一个

int数字 – 二维数据的高和宽这两个维度都会采用这一个数字。- 一个由两个int数字组成的

tuple– 这种情况下,二维数据的高这一维度会采用元组中的第一个int数字,宽这一维度会采用第二个int数字。

Note

取决于你卷积核的大小,有些时候输入数据中某些列(最后几列)可能不会参与计算(比如列数整除卷积核大小有余数,而又没有padding,那最后的余数列一般不会参与卷积计算),这主要是因为pytorch中的互相关操作cross-correlation是保证计算正确的操作(valid operation), 而不是满操作(full operation)。所以实际操作中,还是要亲尽量选择好合适的padding参数哦。

Note

当groups == in_channels 并且 out_channels == K * in_channels(其中K是正整数)的时候,这个操作也被称为深度卷积。

换句话说,对于一个大小为 的输入,要构建一个深度乘数为

的输入,要构建一个深度乘数为K的深度卷积层,可以通过以下参数来创建: 。

。

Note

当程序的运行环境是使用了CuDNN的CUDA环境的时候,一些非确定性的算法(nondeterministic algorithm)可能会被采用以提高整个计算的性能。如果不想使用这些非确定性的算法,你可以通过设置torch.backends.cudnn.deterministic = True来让整个计算过程保持确定性(可能会损失一定的计算性能)。对于后端(background),你可以看一下这一部分Reproducibility了解其相关信息。

Conv2d的参数:

- in_channels (int) – 输入通道个数

- out_channels (int) – 输出通道个数

- kernel_size (int or tuple) – 卷积核大小

- stride (int or tuple, optional) –卷积操作的步长。 默认: 1

- padding (int or tuple, optional) – 输入数据各维度各边上要补齐0的层数。 默认: 0

- dilation (int or tuple, optional) –卷积核各元素之间的距离。 默认: 1

- groups (int, optional) – 输入通道与输出通道之间相互隔离的连接的个数。 默认:1

- bias (bool, optional) – 如果被置为

True,向输出增加一个偏差量,此偏差是可学习参数。 默认:True

Shape:

-

输入:

-

输出:

其中

其中

| 内部Variables: |

- weight (Tensor) – Conv2d模块中的一个大小为 (out_channels, in_channels, kernel_size[0], kernel_size[1])的权重张量,这些权重可训练学习(learnable)。这些权重的初始值的采样空间是

, 其中

, 其中 。

。 - bias (Tensor) – 块的偏差项,大小为(out_channels),可训练学习。如果构造Conv2d时构造函数中的

bias被置为True,那么这些权重的初始值的采样空间是 ,其中

,其中 。

。

例子:

>>> # With square kernels and equal stride

>>> m = nn.Conv2d(16, 33, 3, stride=2)

>>> # non-square kernels and unequal stride and with padding

>>> m = nn.Conv2d(16, 33, (3, 5), stride=(2, 1), padding=(4, 2))

>>> # non-square kernels and unequal stride and with padding and dilation

>>> m = nn.Conv2d(16, 33, (3, 5), stride=(2, 1), padding=(4, 2), dilation=(3, 1))

>>> input = torch.randn(20, 16, 50, 100)

>>> output = m(input)

Conv3d

class torch.nn.Conv3d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True)

利用指定大小的三维卷积核对输入的多通道三维输入信号进行三维卷积操作的卷积层。

最简单的情况下,对于输入大小为 ,输出大小为

,输出大小为 的三维卷积层,其卷积计算过程可以如下表述:

的三维卷积层,其卷积计算过程可以如下表述:

这里的  符号实际上是一个三维互相关 cross-correlation 操作符。

符号实际上是一个三维互相关 cross-correlation 操作符。

-

stride数控制了互相关操作(伪卷积)的步长。 -

padding参数控制了要在三维卷积核的输入信号的各维度各边上要补齐0的层数。 -

dilation参数控制了卷积核中各元素之间的距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。 -

groups控制了输入输出之间的连接(connections)的数量。in_channels和out_channels必须能被groups整除。举个栗子,

> * 当 groups=1, 此Conv3d层会使用一个卷积层进行对所有输入到输出的卷积操作。

> * 当 groups=2, 此时Conv3d层会产生两个并列的卷积层。同时,输入通道被分为两半,两个卷积层分别处理一半的输入通道,同时各自产生一半的输出通道。最后这两个卷积层的输出会被concatenated一起,作为此Conv3d层的输出。

> * 当 groups= in_channels, 每个输入通道都会被单独的一组卷积层处理,这个组的大小是  .

.

kernel_size, stride, padding, dilation这几个参数均支持一下输入形式:

- 一个

int数字 – 三维维数据的深度,高和宽这三个维度都会采用这一个数字。- 一个由三个int数字组成的

tuple– 这种情况下,三维数据的深度这一维度会采用元组中的第一个int数字,高这一维度会采用元组中的第二个int数字,宽这一维度会采用第三个int数字。

Note

取决于你卷积核的大小,有些时候输入数据中某些列(最后几列)可能不会参与计算(比如列数整除卷积核大小有余数,而又没有padding,那最后的余数列一般不会参与卷积计算),这主要是因为pytorch中的互相关操作cross-correlation是保证计算正确的操作(valid operation), 而不是满操作(full operation)。所以实际操作中,还是要亲尽量选择好合适的padding参数哦。

Note

当groups == in_channels 并且 out_channels == K * in_channels(其中K是正整数)的时候,这个操作也被称为深度卷积。

换句话说,对于一个大小为  的输入,要构建一个深度乘数为

的输入,要构建一个深度乘数为K的深度卷积层,可以通过以下参数来创建: 。

。

Note

当程序的运行环境是使用了CuDNN的CUDA环境的时候,一些非确定性的算法(nondeterministic algorithm)可能会被采用以提高整个计算的性能。如果不想使用这些非确定性的算法,你可以通过设置torch.backends.cudnn.deterministic = True来让整个计算过程保持确定性(可能会损失一定的计算性能)。对于后端(background),你可以看一下这一部分Reproducibility了解其相关信息。

Parameters:

- in_channels (int) – 输入通道的个数

- out_channels (int) – 卷积操作输出通道的个数

- kernel_size (int or tuple) – 卷积核大小

- stride (int or tuple, optional) – 卷积操作的步长。 默认: 1

- padding (int or tuple, optional) – 输入数据各维度各边上要补齐0的层数。 默认: 0

- dilation (int or tuple, optional) – 卷积核各元素之间的距离。 默认: 1

- groups (int, optional) – 输入通道与输出通道之间相互隔离的连接的个数。 默认:1

- bias (bool, optional) – 如果被置为

True,向输出增加一个偏差量,此偏差是可学习参数。 默认:True

Shape:

-

输入:

-

输出:

where

where

| 内部Variables: |

- weight (Tensor) – Conv3d模块中的一个大小为 (out_channels, in_channels, kernel_size[0], kernel_size[1], kernel_size[2]) 的权重张量,这些权重可训练学习(learnable)。这些权重的初始值的采样空间是

,其中

,其中 。

。 - bias (Tensor) – 模块的偏差项,大小为(out_channels),可训练学习。如果构造Conv1d时构造函数中的

bias被置为True,那么这些权重的初始值的采样空间是 ,其中

,其中  。

。

例子:

>>> # With square kernels and equal stride

>>> m = nn.Conv3d(16, 33, 3, stride=2)

>>> # non-square kernels and unequal stride and with padding

>>> m = nn.Conv3d(16, 33, (3, 5, 2), stride=(2, 1, 1), padding=(4, 2, 0))

>>> input = torch.randn(20, 16, 10, 50, 100)

>>> output = m(input)

ConvTranspose1d

class torch.nn.ConvTranspose1d(in_channels, out_channels, kernel_size, stride=1, padding=0, output_padding=0, groups=1, bias=True, dilation=1)

利用指定大小的一维转置卷积核对输入的多通道一维输入信号进行转置卷积(当然此卷积也是互相关操作,cross-correlation)操作的模块。

该模块可以看作是Conv1d相对于其输入的梯度(the gradient of Conv1d with respect to its input, 直译), 转置卷积又被称为小数步长卷积或是反卷积(尽管这不是一个真正意义上的反卷积)。

-

stride控制了转置卷积操作的步长 -

padding控制了要在输入的各维度的各边上补齐0的层数,与Conv1d不同的地方,此padding参数与实际补齐0的层数的关系为层数 = kernel_size - 1 - padding,详情请见下面的note。 -

output_padding控制了转置卷积操作输出的各维度的长度增量,但注意这个参数不是说要往转置卷积的输出上pad 0,而是直接控制转置卷积的输出大小为根据此参数pad后的大小。更多的详情请见下面的note。 -

dilation控制了卷积核中各点之间的空间距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。 -

groups控制了输入输出之间的连接(connections)的数量。in_channels和out_channels必须能被groups整除。举个栗子,> * 当 groups=1, 此Conv1d层会使用一个卷积层进行所有输入到输出的卷积操作。

> * 当 groups=2, 此时Conv1d层会产生两个并列的卷积层。同时,输入通道被分为两半,两个卷积层分别处理一半的输入通道,同时各自产生一半的输出通道。最后这两个卷积层的输出会被concatenated一起,作为此Conv1d层的输出。

> * 当 groups=

in_channels, 每个输入通道都会被单独的一组卷积层处理,这个组的大小是 。

。

Note

取决于你卷积核的大小,有些时候输入数据中某些列(最后几列)可能不会参与计算(比如列数整除卷积核大小有余数,而又没有padding,那最后的余数列一般不会参与卷积计算),这主要是因为pytorch中的互相关操作cross-correlation是保证计算正确的操作(valid operation), 而不是满操作(full operation)。所以实际操作中,还是要亲尽量选择好合适的padding参数哦。

Note

padding 参数控制了要在输入的各维度各边上补齐0的层数,与在Conv1d中不同的是,在转置卷积操作过程中,此padding参数与实际补齐0的层数的关系为层数 = kernel_size - 1 - padding, 这样设置的主要原因是当使用相同的参数构建Conv1d 和ConvTranspose1d模块的时候,这种设置能够实现两个模块有正好相反的输入输出的大小,即Conv1d的输出大小是其对应的ConvTranspose1d模块的输入大小,而ConvTranspose1d的输出大小又恰好是其对应的Conv1d模块的输入大小。然而,当stride > 1的时候,Conv1d 的一个输出大小可能会对应多个输入大小,上一个note中就详细的介绍了这种情况,这样的情况下要保持前面提到两种模块的输入输出保持反向一致,那就要用到 output_padding参数了,这个参数可以增加转置卷积输出的某一维度的大小,以此来达到前面提到的同参数构建的Conv1d 和ConvTranspose1d模块的输入输出方向一致。 但注意这个参数不是说要往转置卷积的输出上pad 0,而是直接控制转置卷积的输出各维度的大小为根据此参数pad后的大小。

Note

当程序的运行环境是使用了CuDNN的CUDA环境的时候,一些非确定性的算法(nondeterministic algorithm)可能会被采用以提高整个计算的性能。如果不想使用这些非确定性的算法,你可以通过设置torch.backends.cudnn.deterministic = True来让整个计算过程保持确定性(可能会损失一定的计算性能)。对于后端(background),你可以看一下这一部分Reproducibility了解其相关信息。

Parameters:

- in_channels (int) – 输入通道的个数

- out_channels (int) – 卷积操作输出通道的个数

- kernel_size (int or tuple) – 卷积核大小

- stride (int or tuple, optional) – 卷积操作的步长。 默认: 1

- padding (int or tuple, optional) –

kernel_size - 1 - padding层 0 会被补齐到输入数据的各边上。 默认: 0 - output_padding (int or tuple, optional) – 输出的各维度要增加的大小。默认:0

- groups (int, optional) – 输入通道与输出通道之间相互隔离的连接的个数。 默认:1

- bias (bool, optional) – 如果被置为

True,向输出增加一个偏差量,此偏差是可学习参数。 默认:True - dilation (int or tuple, optional) – 卷积核各元素之间的距离。 默认: 1

Shape:

-

输入:

-

输出:

其中,

其中,

| Variables: |

- weight (Tensor) – 模块中的一个大小为 (in_channels, out_channels, kernel_size[0])的权重张量,这些权重可训练学习(learnable)。这些权重的初始值的采样空间是

,其中

,其中  。

。 - bias (Tensor) – 模块的偏差项,大小为 (out_channels), 如果构造函数中的

bias被置为True,那么这些权重的初始值的采样空间是 ,其中

,其中  。

。

ConvTranspose2d

class torch.nn.ConvTranspose2d(in_channels, out_channels, kernel_size, stride=1, padding=0, output_padding=0, groups=1, bias=True, dilation=1)

利用指定大小的二维转置卷积核对输入的多通道二维输入信号进行转置卷积(当然此卷积也是互相关操作,cross-correlation)操作的模块。

该模块可以看作是Conv2d相对于其输入的梯度(the gradient of Conv2d with respect to its input, 直译), 转置卷积又被称为小数步长卷积或是反卷积(尽管这不是一个真正意义上的反卷积)。

-

stride控制了转置卷积操作的步长 -

padding控制了要在输入的各维度的各边上补齐0的层数,与Conv1d不同的地方,此padding参数与实际补齐0的层数的关系为层数 = kernel_size - 1 - padding,详情请见下面的note。 -

output_padding控制了转置卷积操作输出的各维度的长度增量,但注意这个参数不是说要往转置卷积的输出上pad 0,而是直接控制转置卷积的输出大小为根据此参数pad后的大小。更多的详情请见下面的note。 -

dilation控制了卷积核中各点之间的空间距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。 -

groups控制了输入输出之间的连接(connections)的数量。in_channels和out_channels必须能被groups整除。举个栗子,> * 当 groups=1, 此Conv1d层会使用一个卷积层进行所有输入到输出的卷积操作。

> * 当 groups=2, 此时Conv1d层会产生两个并列的卷积层。同时,输入通道被分为两半,两个卷积层分别处理一半的输入通道,同时各自产生一半的输出通道。最后这两个卷积层的输出会被concatenated一起,作为此Conv1d层的输出。

> * 当 groups=

in_channels, 每个输入通道都会被单独的一组卷积层处理,这个组的大小是 。

。

kernel_size, stride, padding, output_padding 这几个参数均支持一下输入形式:

- 一个

int数字 – 二维维数据的高和宽这两个维度都会采用这一个数字。- 一个由两个int数字组成的

tuple– 这种情况下,二维数据的高这一维度会采用元组中的第一个int数字,宽这一维度会采用第二个int数字。

Note

取决于你卷积核的大小,有些时候输入数据中某些列(最后几列)可能不会参与计算(比如列数整除卷积核大小有余数,而又没有padding,那最后的余数列一般不会参与卷积计算),这主要是因为pytorch中的互相关操作cross-correlation是保证计算正确的操作(valid operation), 而不是满操作(full operation)。所以实际操作中,还是要亲尽量选择好合适的padding参数哦。

Note

padding 参数控制了要在输入的各维度各边上补齐0的层数,与在Conv1d中不同的是,在转置卷积操作过程中,此padding参数与实际补齐0的层数的关系为层数 = kernel_size - 1 - padding, 这样设置的主要原因是当使用相同的参数构建Conv2d 和ConvTranspose2d模块的时候,这种设置能够实现两个模块有正好相反的输入输出的大小,即Conv2d的输出大小是其对应的ConvTranspose2d模块的输入大小,而ConvTranspose2d的输出大小又恰好是其对应的Conv2d模块的输入大小。然而,当stride > 1的时候,Conv2d 的一个输出大小可能会对应多个输入大小,上一个note中就详细的介绍了这种情况,这样的情况下要保持前面提到两种模块的输入输出保持反向一致,那就要用到 output_padding参数了,这个参数可以增加转置卷积输出的某一维度的大小,以此来达到前面提到的同参数构建的Conv2d 和ConvTranspose2d模块的输入输出方向一致。 但注意这个参数不是说要往转置卷积的输出上pad 0,而是直接控制转置卷积的输出各维度的大小为根据此参数pad后的大小。

Note

当程序的运行环境是使用了CuDNN的CUDA环境的时候,一些非确定性的算法(nondeterministic algorithm)可能会被采用以提高整个计算的性能。如果不想使用这些非确定性的算法,你可以通过设置torch.backends.cudnn.deterministic = True来让整个计算过程保持确定性(可能会损失一定的计算性能)。对于后端(background),你可以看一下这一部分Reproducibility了解其相关信息。

Parameters:

- in_channels (int) – 输入通道的个数

- out_channels (int) – 卷积操作输出通道的个数

- kernel_size (int or tuple) – 卷积核大小

- stride (int or tuple, optional) – 卷积操作的步长。 默认: 1

- padding (int or tuple, optional) –

kernel_size - 1 - padding层 0 会被补齐到输入数据的各边上。 默认: 0 - output_padding (int or tuple, optional) – 输出的各维度要增加的大小。默认:0

- groups (int, optional) – 输入通道与输出通道之间相互隔离的连接的个数。 默认:1

- bias (bool, optional) – 如果被置为

True,向输出增加一个偏差量,此偏差是可学习参数。 默认:True - dilation (int or tuple, optional) – 卷积核各元素之间的距离。 默认: 1

Shape:

- 输入:

- 输出:

其中

其中

| Variables: |

- weight (Tensor) – 模块中的一个大小为 (in_channels, out_channels, kernel_size[0], kernel_size[1])的权重张量,这些权重可训练学习(learnable)。这些权重的初始值的采样空间是

,其中

,其中  。

。 - bias (Tensor) – 模块的偏差项,大小为 (out_channels), 如果构造函数中的

bias被置为True,那么这些权重的初始值的采样空间是 ,其中

,其中  。

。

例子:

>>> # With square kernels and equal stride

>>> m = nn.ConvTranspose2d(16, 33, 3, stride=2)

>>> # non-square kernels and unequal stride and with padding

>>> m = nn.ConvTranspose2d(16, 33, (3, 5), stride=(2, 1), padding=(4, 2))

>>> input = torch.randn(20, 16, 50, 100)

>>> output = m(input)

>>> # exact output size can be also specified as an argument

>>> input = torch.randn(1, 16, 12, 12)

>>> downsample = nn.Conv2d(16, 16, 3, stride=2, padding=1)

>>> upsample = nn.ConvTranspose2d(16, 16, 3, stride=2, padding=1)

>>> h = downsample(input)

>>> h.size()

torch.Size([1, 16, 6, 6])

>>> output = upsample(h, output_size=input.size())

>>> output.size()

torch.Size([1, 16, 12, 12])

ConvTranspose3d

class torch.nn.ConvTranspose3d(in_channels, out_channels, kernel_size, stride=1, padding=0, output_padding=0, groups=1, bias=True, dilation=1)

利用指定大小的三维转置卷积核对输入的多通道三维输入信号进行转置卷积(当然此卷积也是互相关操作,cross-correlation)操作的模块。转置卷积的操作本质是将各通道输入与卷积核做乘法,然后返回各通道与此卷积核乘积结果之和(卷积的定义)。

该模块可以看作是Conv3d相对于其输入的梯度(the gradient of Conv3d with respect to its input, 直译), 转置卷积又被称为小数步长卷积或是反卷积(尽管这不是一个真正意义上的反卷积)。

-

stride控制了转置卷积操作的步长 -

padding控制了要在输入的各维度的各边上补齐0的层数,与Conv1d不同的地方,此padding参数与实际补齐0的层数的关系为层数 = kernel_size - 1 - padding,详情请见下面的note。 -

output_padding控制了转置卷积操作输出的各维度的长度增量,但注意这个参数不是说要往转置卷积的输出上pad 0,而是直接控制转置卷积的输出大小为根据此参数pad后的大小。更多的详情请见下面的note。 -

dilation控制了卷积核中各点之间的空间距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。 -

groups控制了输入输出之间的连接(connections)的数量。in_channels和out_channels必须能被groups整除。举个栗子,> * 当 groups=1, 此Conv1d层会使用一个卷积层进行所有输入到输出的卷积操作。

> * 当 groups=2, 此时Conv1d层会产生两个并列的卷积层。同时,输入通道被分为两半,两个卷积层分别处理一半的输入通道,同时各自产生一半的输出通道。最后这两个卷积层的输出会被concatenated一起,作为此Conv1d层的输出。

> * 当 groups=

in_channels, 每个输入通道都会被单独的一组卷积层处理,这个组的大小是 。

。

kernel_size, stride, padding, output_padding 这几个参数均支持一下输入形式:

- 一个

int数字 – 三维维数据的深度,高和宽这两个维度都会采用这一个数字。- 一个由三个int数字组成的

tuple– 这种情况下,三维数据的深度这一维度会采用元组中的第一个int数字,高这一维度会采用元组中的第二个int数字,宽这一维度会采用第三个int数字。

Note

取决于你卷积核的大小,有些时候输入数据中某些列(最后几列)可能不会参与计算(比如列数整除卷积核大小有余数,而又没有padding,那最后的余数列一般不会参与卷积计算),这主要是因为pytorch中的互相关操作cross-correlation是保证计算正确的操作(valid operation), 而不是满操作(full operation)。所以实际操作中,还是要亲尽量选择好合适的padding参数哦。

Note

padding 参数控制了要在输入的各维度各边上补齐0的层数,与在Conv3d中不同的是,在转置卷积操作过程中,此padding参数与实际补齐0的层数的关系为层数 = kernel_size - 1 - padding, 这样设置的主要原因是当使用相同的参数构建Conv3d 和ConvTranspose3d模块的时候,这种设置能够实现两个模块有正好相反的输入输出的大小,即Conv3d的输出大小是其对应的ConvTranspose3d模块的输入大小,而ConvTranspose3d的输出大小又恰好是其对应的Conv3d模块的输入大小。然而,当stride > 1的时候,Conv3d 的一个输出大小可能会对应多个输入大小,上一个note中就详细的介绍了这种情况,这样的情况下要保持前面提到两种模块的输入输出保持反向一致,那就要用到 output_padding参数了,这个参数可以增加转置卷积输出的某一维度的大小,以此来达到前面提到的同参数构建的Conv3d 和ConvTranspose3d模块的输入输出方向一致。 但注意这个参数不是说要往转置卷积的输出上pad 0,而是直接控制转置卷积的输出各维度的大小为根据此参数pad后的大小。

Note

当程序的运行环境是使用了CuDNN的CUDA环境的时候,一些非确定性的算法(nondeterministic algorithm)可能会被采用以提高整个计算的性能。如果不想使用这些非确定性的算法,你可以通过设置torch.backends.cudnn.deterministic = True来让整个计算过程保持确定性(可能会损失一定的计算性能)。对于后端(background),你可以看一下这一部分Reproducibility了解其相关信息。

Parameters:

- in_channels (int) – 输入通道的个数

- out_channels (int) – 卷积操作输出通道的个数

- kernel_size (int or tuple) – 卷积核大小

- stride (int or tuple, optional) – 卷积操作的步长。 默认: 1

- padding (int or tuple, optional) –

kernel_size - 1 - padding层 0 会被补齐到输入数据的各边上。 默认: 0 - output_padding (int or tuple, optional) – 输出的各维度要增加的大小。默认:0

- groups (int, optional) – 输入通道与输出通道之间相互隔离的连接的个数。 默认:1

- bias (bool, optional) – 如果被置为

True,向输出增加一个偏差量,此偏差是可学习参数。 默认:True - dilation (int or tuple, optional) – 卷积核各元素之间的距离。 默认: 1

Shape:

- 输入:

- 输出:

其中

其中

| Variables: |

- weight (Tensor) – 模块中的一个大小为 (in_channels, out_channels, kernel_size[0], kernel_size[1], kernel_size[2])的权重张量,这些权重可训练学习(learnable)。这些权重的初始值的采样空间是

,其中

,其中  。

。 - bias (Tensor) – 模块的偏差项,大小为 (out_channels), 如果构造函数中的

bias被置为True,那么这些权重的初始值的采样空间是 ,其中

,其中  。

。

例子:

>>> # With square kernels and equal stride

>>> m = nn.ConvTranspose3d(16, 33, 3, stride=2)

>>> # non-square kernels and unequal stride and with padding

>>> m = nn.ConvTranspose3d(16, 33, (3, 5, 2), stride=(2, 1, 1), padding=(0, 4, 2))

>>> input = torch.randn(20, 16, 10, 50, 100)

>>> output = m(input)

Unfold

class torch.nn.Unfold(kernel_size, dilation=1, padding=0, stride=1)

将一个batch的输入张量展开成由多个滑动局部块组成的形式。(im2col的扩展模块,起到基本类似im2col的作用)

以一个大小为 的批次化(batched)输入张量为例,其中

的批次化(batched)输入张量为例,其中 是batch的大小,

是batch的大小, 是通道数量,

是通道数量, 代表了任意空间维度。那Unfold这个操作在此张量上的操作就是,将这个张量展开成由多个

代表了任意空间维度。那Unfold这个操作在此张量上的操作就是,将这个张量展开成由多个kernel_size大小的滑动块组成的大小为 的三维张量,其中

的三维张量,其中 是每个块中数的个数(每个块有

是每个块中数的个数(每个块有 个空间位置,每个空间位置存储一个通道大小为

个空间位置,每个空间位置存储一个通道大小为 的向量),

的向量), 是块的个数:

是块的个数:

(这张图有问题啊,编辑整理的时候注意修正一下)

(这张图有问题啊,编辑整理的时候注意修正一下)

其中  是由上面例子中的

是由上面例子中的input各空间维度组成的, 遍历了各个空间维度。

遍历了各个空间维度。

因此,索引Fold操作的output的最后一个维度等价于索引某一个block,而索引操作的返回值是这个索引到的block中的所有值。

padding, stride 和 dilation 参数指明了滑动块的相关性质。

stride控制了滑动块的步长。padding控制了在变换之前要向input的各维度各边上补齐的0的层数。dilation控制了卷积核中各点之间的空间距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。

Parameters:

- kernel_size (int or tuple) – 滑动块的大小

- stride (int or tuple, optional) – 滑动块在输入各维度上的步长。默认: 1

- padding (int or tuple, optional) – 在输入各维度各边上补齐0的层数。

-

dilation (int or tuple, optional) – 控制了各元素之间的距离(没有指明元素具体指的是谁的元素,猜测是输出的)。默认:1

-

如果

kernel_size,dilation,padding或者stride的值是一个int,或是一个长度为1的int元组,在相关操作的时候各个空间维度上都会使用这同一个值。 - 如果输出向量有两个空间维度,那么此Fold操作有时又被称为

im2col。

Note

Fold在执行类col2im的操作的时候,主要是是通过集成此im(输出张量)分裂出所有对应位置的col(输入的滑动块)来复原原im。而Unfold则是通过从输入张量中不断拷贝数值到相应的block中来生成由滑动块组成的输出张量。所以,如果滑动块之间如果有数值重叠,那这些滑动块之间并不是互逆的。

Warning

目前,只有四维张量(比如批次化的图像张量)支持这个操作。

Shape:

- 输入:

- 输出:

Examples:

>>> unfold = nn.Unfold(kernel_size=(2, 3))

>>> input = torch.randn(2, 5, 3, 4)

>>> output = unfold(input)

>>> # each patch contains 30 values (2x3=6 vectors, each of 5 channels)

>>> # 4 blocks (2x3 kernels) in total in the 3x4 input

>>> output.size()

torch.Size([2, 30, 4])

>>> # Convolution is equivalent with Unfold + Matrix Multiplication + Fold (or view to output shape)

>>> inp = torch.randn(1, 3, 10, 12)

>>> w = torch.randn(2, 3, 4, 5)

>>> inp_unf = torch.nn.functional.unfold(inp, (4, 5))

>>> out_unf = inp_unf.transpose(1, 2).matmul(w.view(w.size(0), -1).t()).transpose(1, 2)

>>> out = torch.nn.functional.fold(out_unf, (7, 8), (1, 1))

>>> # or equivalently (and avoiding a copy),

>>> # out = out_unf.view(1, 2, 7, 8)

>>> (torch.nn.functional.conv2d(inp, w) - out).abs().max()

tensor(1.9073e-06)

Fold

class torch.nn.Fold(output_size, kernel_size, dilation=1, padding=0, stride=1)

将由滑动局部块组成的数组集合为一个大张量。(类col2im)

考虑一个包含了很多个滑动局部块的输入张量,比如,一批图像分割块(patches of images)的集合,大小为 ,其中

,其中 是batch大小,

是batch大小,  是一个块中的数值个数(每个块有

是一个块中的数值个数(每个块有 个空间位置,每个空间位置存储一个通道大小为

个空间位置,每个空间位置存储一个通道大小为 的向量),

的向量), 是滑动块的个数。(这些大小参数严格遵循了

是滑动块的个数。(这些大小参数严格遵循了Unfold操作的输出向量的大小规定。)Fold操作通过求和重叠值的方式来将这些局部块集合为一个大小为 的

的output张量。与 Unfold类似,这些参数必须满足:

其中 遍历了各个空间维度。

遍历了各个空间维度。

output_size描述了要生成的output的各空间维度的大小。有时,同样数量的滑动块,可能会产生多种input的形状,比如,当stride > 0的时候,这时候,设置output_size参数就会显得极为重要。

padding, stride 和 dilation 参数指明了滑动块的相关性质。

stride控制了滑动块的步长。padding控制了在变换之前要向input的各维度各边上补齐的0的层数。dilation控制了卷积核中各点之间的空间距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。

Parameters:

-

output_size (int or tuple) – 输出向量的各空间维度的大小 (i.e.,

input.sizes()[2:]) - stride (int or tuple, optional) – 滑动块在输入各维度上的步长。默认: 1

- padding (int or tuple, optional) – 在输入各维度各边上补齐0的层数。

-

dilation (int or tuple, optional) – 控制了各元素之间的距离(没有指明元素具体指的是谁的元素,猜测是输出的)。默认:1

-

如果

output_size,kernel_size,dilation,padding或者stride是一个int或者长度为1的int元组,在相关操作的时候各个空间维度上都会使用这同一个值。 - 如果此输出向量的空间维度数为2,那么此Fold操作有时又被称为

col2im。

Note

Fold在执行类col2im的操作的时候,主要是是通过集成此im(输出张量)分裂出所有对应位置的col(输入的滑动块)来复原原im。而Unfold则是通过从输入张量中不断拷贝数值到相应的block中来生成由滑动块组成的输出张量。所以,如果滑动块之间如果有数值重叠,那这些滑动块之间并不是互逆的。

Warning

目前,只有四维张量(比如批次化的图像张量)支持这个操作。

Shape:

- 输入:

- 输出:

举例:

>>> fold = nn.Fold(output_size=(4, 5), kernel_size=(2, 2))

>>> input = torch.randn(1, 3 * 2 * 2, 1)

>>> output = fold(input)

>>> output.size()

卷积层部分Fold 与 Unfold 是1.0新增的内容,猜测其主要目的是开放col2im和im2col这两个通过矩阵乘法实现卷积操作的前序接口,要好好理解这部分可能要了解一下现在主流框架通过大矩阵乘法来实现卷积操作这一通用做法了,这一篇文章就介绍的很好[Implementing convolution as a matrix multiplication](https://buptldy.github.io/2016/10/01/2016-10-01-im2col/),这一段如果感觉我的直译晦涩难懂,那我深感抱歉并建议看一下英文原版,虽然我觉得英文原版介绍的也是晦涩难懂

池化层(Pooling layers)

MaxPool1d

class torch.nn.MaxPool1d(kernel_size, stride=None, padding=0, dilation=1, return_indices=False, ceil_mode=False)

对输入的多通道信号执行一维最大池化操作。

最简单的情况下,对于输入大小为  ,输出大小为

,输出大小为 的池化操作,此池化过程可表述如下:

的池化操作,此池化过程可表述如下:

padding 参数控制了要在输入信号的各维度各边上要补齐0的层数。

dilation 参数控制了池化核中各元素之间的距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。

Parameters:

- kernel_size – 最大池化操作的滑动窗大小

- stride – 滑动窗的步长,默认值是

kernel_size - padding – 要在输入信号的各维度各边上要补齐0的层数

- dilation – 滑动窗中各元素之间的距离

- return_indices – 如果此参数被设置为

True, 那么此池化层在返回输出信号的同时还会返回一连串滑动窗最大值的索引位置,即每个滑动窗的最大值位置信息。这些信息可以在后面的上采样torch.nn.MaxUnpool1d中被用到。 - ceil_mode – 如果此参数被设置为True,计算输出信号大小的时候,会使用向上取整,代替默认的向下取整的操作

Shape:

-

输入:

-

输出:

其中

其中

例子:

>>> # pool of size=3, stride=2

>>> m = nn.MaxPool1d(3, stride=2)

>>> input = torch.randn(20, 16, 50)

>>> output = m(input)

MaxPool2d

class torch.nn.MaxPool2d(kernel_size, stride=None, padding=0, dilation=1, return_indices=False, ceil_mode=False)

对输入的多通道信号执行二维最大池化操作。

最简单的情况下,对于输入大小为  ,输出大小为

,输出大小为 ,

,kernel_size为 的池化操作,此池化过程可表述如下:

的池化操作,此池化过程可表述如下:

padding 参数控制了要在输入信号的各维度各边上要补齐0的层数。

dilation 参数控制了池化核中各元素之间的距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。

kernel_size, stride, padding, dilation 等参数均支持以下类型输入:

- 一个单独的

int– 此时这个int会同时控制池化滑动窗的宽和高这两个维度的大小- 一个由两个

int组成的tuple– 这种情况下,高这一维度会采用元组中的第一个int数字,宽这一维度会采用第二个int数字。

Parameters:

- kernel_size – 最大池化操作的滑动窗大小

- stride – 滑动窗的步长,默认值是

kernel_size - padding – 要在输入信号的各维度各边上要补齐0的层数

- dilation – 滑动窗中各元素之间的距离

- return_indices – 如果此参数被设置为

True, 那么此池化层在返回输出信号的同时还会返回一连串滑动窗最大值的索引位置,即每个滑动窗的最大值位置信息。这些信息可以在后面的上采样torch.nn.MaxUnpool2d中被用到。 - ceil_mode – 如果此参数被设置为True,计算输出信号大小的时候,会使用向上取整,代替默认的向下取整的操作

Shape:

-

输入:

-

输出:

, 其中

, 其中

例子:

>>> # pool of square window of size=3, stride=2

>>> m = nn.MaxPool2d(3, stride=2)

>>> # pool of non-square window

>>> m = nn.MaxPool2d((3, 2), stride=(2, 1))

>>> input = torch.randn(20, 16, 50, 32)

>>> output = m(input)

MaxPool3d

class torch.nn.MaxPool3d(kernel_size, stride=None, padding=0, dilation=1, return_indices=False, ceil_mode=False)

对输入的多通道信号执行三维最大池化操作。

最简单的情况下,对于输入大小为  ,输出大小为

,输出大小为 ,

,kernel_size为  的池化操作,此池化过程可表述如下:

的池化操作,此池化过程可表述如下:

padding 参数控制了要在输入信号的各维度各边上要补齐0的层数。

dilation 参数控制了池化核中各元素之间的距离;这也被称为多孔算法(à trous algorithm)。这个概念有点难解释,这个链接link用可视化的方法很好地解释了dilation的作用。

kernel_size, stride, padding, dilation 等参数均支持以下类型输入:

- 一个单独的

int– 此时这个int会同时控制池化滑动窗的深度,宽和高这三个维度的大小- 一个由三个

int组成的tuple– 这种情况下,深度这一维度会采用元组中的第一个int数字,高这一维度会采用元组中的第二个int数字,宽这一维度会采用第三个int数字。

Parameters:

- kernel_size – 最大池化操作的滑动窗大小

- stride – 滑动窗的步长,默认值是

kernel_size - padding – 要在输入信号的各维度各边上要补齐0的层数

- dilation – 滑动窗中各元素之间的距离

- return_indices – 如果此参数被设置为

True, 那么此池化层在返回输出信号的同时还会返回一连串滑动窗最大值的索引位置,即每个滑动窗的最大值位置信息。这些信息可以在后面的上采样torch.nn.MaxUnpool3d中被用到。 - ceil_mode – 如果此参数被设置为True,计算输出信号大小的时候,会使用向上取整,代替默认的向下取整的操作

Shape:

-

输入:

-

输出:

, 其中

, 其中

例子:

>>> # pool of square window of size=3, stride=2

>>> m = nn.MaxPool3d(3, stride=2)

>>> # pool of non-square window

>>> m = nn.MaxPool3d((3, 2, 2), stride=(2, 1, 2))

>>> input = torch.randn(20, 16, 50,44, 31)

>>> output = m(input)

MaxUnpool1d

class torch.nn.MaxUnpool1d(kernel_size, stride=None, padding=0)

MaxPool1d的逆过程,不过并不是完全的逆过程,因为在MaxPool1d的过程中,池化窗区域内的非最大值都已经丢失。 MaxUnpool1d的输入是MaxPool1d的输出,其中也包括包括滑动窗最大值的索引(即return_indices所控制的输出),逆池化操作的过程就是将MaxPool1d过程中产生的最大值插回到原来的位置,并将非最大值区域置为0。

Note

MaxPool1d操作可以将多个大小不同的输入映射到相同的输出大小。因此,池化操作的反过程,MaxUnpool1d的上采样过程的输出大小就不唯一了。为了适应这一点,可以在设置控制上采样输出大小的(output_size)参数。 具体用法,请参阅下面的输入和示例

Parameters:

- kernel_size (int or tuple) – 最大池化窗的大小

- stride (int or tuple) – 最大池化窗的步长。默认

kernel_size - padding (int or tuple) – 输入信号的各维度各边要补齐0的层数

Inputs:

input: 要执行上采样操作的张量indices:MaxPool1d池化过程中输出的池化窗最大值的位置索引output_size(选填): 指定的输出大小

Shape:

-

输入:

-

输出:

, 其中

, 其中

也可以使用

output_size指定输出的大小

例子:

>>> pool = nn.MaxPool1d(2, stride=2, return_indices=True)

>>> unpool = nn.MaxUnpool1d(2, stride=2)

>>> input = torch.tensor([[[1., 2, 3, 4, 5, 6, 7, 8]]])

>>> output, indices = pool(input)

>>> unpool(output, indices)

tensor([[[ 0., 2., 0., 4., 0., 6., 0., 8.]]])

>>> # Example showcasing the use of output_size

>>> input = torch.tensor([[[1., 2, 3, 4, 5, 6, 7, 8, 9]]])

>>> output, indices = pool(input)

>>> unpool(output, indices, output_size=input.size())

tensor([[[ 0., 2., 0., 4., 0., 6., 0., 8., 0.]]])

>>> unpool(output, indices)

tensor([[[ 0., 2., 0., 4., 0., 6., 0., 8.]]])

MaxUnpool2d

class torch.nn.MaxUnpool2d(kernel_size, stride=None, padding=0)

MaxPool2d的逆过程,不过并不是完全的逆过程,因为在MaxPool2d的过程中,池化窗区域内的非最大值都已经丢失。 MaxUnpool2d的输入是MaxPool2d的输出,其中也包括包括滑动窗最大值的索引(即return_indices所控制的输出),逆池化操作的过程就是将MaxPool2d过程中产生的最大值插回到原来的位置,并将非最大值区域置为0。

Note

MaxPool2d操作可以将多个大小不同的输入映射到相同的输出大小。因此,池化操作的反过程,MaxUnpool2d的上采样过程的输出大小就不唯一了。为了适应这一点,可以在设置控制上采样输出大小的(output_size)参数。 具体用法,请参阅下面的输入和示例

Parameters:

- kernel_size (int or tuple) – 最大池化窗的大小

- stride (int or tuple) – 最大池化窗的步长。默认

kernel_size - padding (int or tuple) – 输入信号的各维度各边要补齐0的层数

Inputs:

input: 要执行上采样操作的张量indices:MaxPool2d池化过程中输出的池化窗最大值的位置索引output_size(选填): 指定的输出大小

Shape:

-

输入:

-

输出:

, 其中

, 其中

也可以使用

output_size指定输出的大小

例子:

>>> pool = nn.MaxPool2d(2, stride=2, return_indices=True)

>>> unpool = nn.MaxUnpool2d(2, stride=2)

>>> input = torch.tensor([[[[ 1., 2, 3, 4],

[ 5, 6, 7, 8],

[ 9, 10, 11, 12],

[13, 14, 15, 16]]]])

>>> output, indices = pool(input)

>>> unpool(output, indices)

tensor([[[[ 0., 0., 0., 0.],

[ 0., 6., 0., 8.],

[ 0., 0., 0., 0.],

[ 0., 14., 0., 16.]]]])

>>> # specify a different output size than input size

>>> unpool(output, indices, output_size=torch.Size([1, 1, 5, 5]))

tensor([[[[ 0., 0., 0., 0., 0.],

[ 6., 0., 8., 0., 0.],

[ 0., 0., 0., 14., 0.],

[ 16., 0., 0., 0., 0.],

[ 0., 0., 0., 0., 0.]]]])

MaxUnpool3d

class torch.nn.MaxUnpool3d(kernel_size, stride=None, padding=0)

MaxPool3d的逆过程,不过并不是完全的逆过程,因为在MaxPool3d的过程中,池化窗区域内的非最大值都已经丢失。 MaxUnpool3d的输入是MaxPool3d的输出,其中也包括包括滑动窗最大值的索引(即return_indices所控制的输出),逆池化操作的过程就是将MaxPool3d过程中产生的最大值插回到原来的位置,并将非最大值区域置为0。

Note

MaxPool3d操作可以将多个大小不同的输入映射到相同的输出大小。因此,池化操作的反过程,MaxUnpool3d的上采样过程的输出大小就不唯一了。为了适应这一点,可以在设置控制上采样输出大小的(output_size)参数。 具体用法,请参阅下面的输入和示例

Parameters:

- kernel_size (int or tuple) – 最大池化窗的大小

- stride (int or tuple) – 最大池化窗的步长。默认

kernel_size - padding (int or tuple) – 输入信号的各维度各边要补齐0的层数

Inputs:

input: 要执行上采样操作的张量indices:MaxPool3d池化过程中输出的池化窗最大值的位置索引output_size(选填): 指定的输出大小

Shape:

-

输入:

-

输出:

, 其中

, 其中

也可以使用

output_size指定输出的大小

例子:

>>> # pool of square window of size=3, stride=2

>>> pool = nn.MaxPool3d(3, stride=2, return_indices=True)

>>> unpool = nn.MaxUnpool3d(3, stride=2)

>>> output, indices = pool(torch.randn(20, 16, 51, 33, 15))

>>> unpooled_output = unpool(output, indices)

>>> unpooled_output.size()

torch.Size([20, 16, 51, 33, 15])

AvgPool1d

class torch.nn.AvgPool1d(kernel_size, stride=None, padding=0, ceil_mode=False, count_include_pad=True)

对输入的多通道信号执行一维平均池化操作。

最简单的情况下,对于输入大小为  ,输出大小为

,输出大小为 ,

,kernel_size为 的池化操作,此池化过程可表述如下:

的池化操作,此池化过程可表述如下:

padding 参数控制了要在输入信号的各维度各边上要补齐0的层数。

kernel_size, stride, padding, dilation 等参数均支持输入一个int或者由一个int组成的tuple。

Parameters:

- kernel_size – 平均池化操作的滑动窗大小

- stride – 滑动窗的步长,默认值是

kernel_size - padding – 要在输入信号的各维度各边上要补齐0的层数

- ceil_mode – 如果此参数被设置为True,计算输出信号大小的时候,会使用向上取整,代替默认的向下取整的操作

- count_include_pad – 如果被设置为True, 那么在进行平均运算的时候也会将用于补齐的0加入运算。

Shape:

-

输入:

-

输出:

, 其中

, 其中

例子:

>>> # pool with window of size=3, stride=2

>>> m = nn.AvgPool1d(3, stride=2)

>>> m(torch.tensor([[[1.,2,3,4,5,6,7]]]))

tensor([[[ 2., 4., 6.]]])

AvgPool2d

class torch.nn.AvgPool2d(kernel_size, stride=None, padding=0, ceil_mode=False, count_include_pad=True)

对输入的多通道信号执行二维平均池化操作。

最简单的情况下,对于输入大小为  ,输出大小为

,输出大小为 ,

,kernel_size为 的池化操作,此池化过程可表述如下:

的池化操作,此池化过程可表述如下:

padding 参数控制了要在输入信号的各维度各边上要补齐0的层数。

kernel_size, stride, padding等参数均支持以下类型输入:

- 一个单独的

int– 此时这个int会同时控制池化滑动窗的宽和高这两个维度的大小- 一个由两个

int组成的tuple– 这种情况下,高这一维度会采用元组中的第一个int数字,宽这一维度会采用第二个int数字。

Parameters:

- kernel_size – 平均池化操作的滑动窗大小

- stride – 滑动窗的步长,默认值是

kernel_size - padding – 要在输入信号的各维度各边上要补齐0的层数

- ceil_mode – 如果此参数被设置为True,计算输出信号大小的时候,会使用向上取整,代替默认的向下取整的操作

- count_include_pad – 如果被设置为True, 那么在进行平均运算的时候也会将用于补齐的0加入运算。

Shape:

-

输入:

-

输出:

, 其中

, 其中

例子:

>>> # pool of square window of size=3, stride=2

>>> m = nn.AvgPool2d(3, stride=2)

>>> # pool of non-square window

>>> m = nn.AvgPool2d((3, 2), stride=(2, 1))

>>> input = torch.randn(20, 16, 50, 32)

>>> output = m(input)

AvgPool3d

class torch.nn.AvgPool3d(kernel_size, stride=None, padding=0, ceil_mode=False, count_include_pad=True)

对输入的多通道信号执行三维平均池化操作。

最简单的情况下,对于输入大小为 ,输出大小为

,输出大小为 ,

,kernel_size为 的池化操作,此池化过程可表述如下:

的池化操作,此池化过程可表述如下:

padding 参数控制了要在输入信号的各维度各边上要补齐0的层数。

kernel_size, stride, padding等参数均支持以下类型输入:

- 一个单独的

int– 此时这个int会同时控制池化滑动窗的深度,宽和高这两个维度的大小- 一个由三个

int组成的tuple– 这种情况下,深度这一维度会采用元组中的第一个int数字,高这一维度会采用元组中的第二个int数字,宽这一维度会采用第三个int数字。

Parameters:

- kernel_size – 平均池化操作的滑动窗大小

- stride – 滑动窗的步长,默认值是

kernel_size - padding – 要在输入信号的各维度各边上要补齐0的层数

- ceil_mode – 如果此参数被设置为True,计算输出信号大小的时候,会使用向上取整,代替默认的向下取整的操作

- count_include_pad – 如果被设置为True, 那么在进行平均运算的时候也会将用于补齐的0加入运算。

Shape:

-

输入:

-

输出:

, 其中

, 其中

例子:

>>> # pool of square window of size=3, stride=2

>>> m = nn.AvgPool3d(3, stride=2)

>>> # pool of non-square window

>>> m = nn.AvgPool3d((3, 2, 2), stride=(2, 1, 2))

>>> input = torch.randn(20, 16, 50,44, 31)

>>> output = m(input)

FractionalMaxPool2d

class torch.nn.FractionalMaxPool2d(kernel_size, output_size=None, output_ratio=None, return_indices=False, _random_samples=None)

对输入的多通道信号执行小数级二维最大池化操作。小数级指的是此操作的输出大小与输入大小成指定的小数倍数关系。

Ben Graham的这篇文章Fractional MaxPooling中详细地介绍了小数级二维最大池化的基本思想和技术细节。

小数级二维最大池化的基本思想就是将最大池化操作应用于 个由随机步长大小采集的区域中,这些步长大小是由输出目标的大小决定的。小数级二维最大池化的输出特征的数量等于输入通道的数量。

个由随机步长大小采集的区域中,这些步长大小是由输出目标的大小决定的。小数级二维最大池化的输出特征的数量等于输入通道的数量。

Parameters:

- kernel_size – 执行最大操作的窗口大小。支持的数据类型包括一个单独的数字k(生成一个大小为k x k的正方形kernal),或者一个元组

(kh x kw) - output_size – 池化输出目标大小,具体形式是

oH x oW。支持的数据类型包括一个单独的数字oH,或者一个元组(oH, oW),注意此处oH x oW与kernal_size中的kh x ow相呼应,两者成一定的小数级倍数关系 - output_ratio – 如果想让输出目标的大小是输入目标大小的ratio倍,可以通过设置此参数来实现。此参数可以是一个小数数字或者小数元组,数字范围是(0, 1)

- return_indices – 如果此参数设置为

True, 那么在池化操作结束后,返回池化输出结果的同时也会返回每个池化区域中,最大值的位置信息。这些信息在nn.MaxUnpool2d()可以被用到。此参数默认为False

例子

>>> # pool of square window of size=3, and target output size 13x12

>>> m = nn.FractionalMaxPool2d(3, output_size=(13, 12))

>>> # pool of square window and target output size being half of input image size

>>> m = nn.FractionalMaxPool2d(3, output_ratio=(0.5, 0.5))

>>> input = torch.randn(20, 16, 50, 32)

>>> output = m(input)

LPPool1d

class torch.nn.LPPool1d(norm_type, kernel_size, stride=None, ceil_mode=False)

对输入的多通道信号执行一维幂平均池化操作。

对于每个池化窗口,此池化操作的计算方式如下:

- 当p为无穷大的时候时,等价于最大池化操作

- 当

p=1时,等价于求和池化操作(一定程度上等价于平均池化)

Note

如果某个特殊的输入导致这个输入关于幂指数p的求和是0,那上述池化函数在这一点是没有意义的。在实际实现过程中,此点的梯度被设置为0。

Parameters:

- kernel_size: 池化窗口的大小

- stride:池化窗口移动的步长。默认值是

kernel_size - ceil_mode: 当此参数被设置为

True时,在计算输出大小的时候将使用向下取整代替向上取整

Shape:

-

输入:

-

输出:

,其中

,其中

例子:

>>> # power-2 pool of window of length 3, with stride 2.

>>> m = nn.LPPool1d(2, 3, stride=2)

>>> input = torch.randn(20, 16, 50)

>>> output = m(input)

LPPool2d

class torch.nn.LPPool2d(norm_type, kernel_size, stride=None, ceil_mode=False)

对输入的多通道信号执行二维幂平均池化操作。

对于每个池化窗口,此池化操作的计算方式如下:

- 当p等于

时候时,等价于最大池化操作

时候时,等价于最大池化操作 - 当

p=1时,等价于求和池化操作(一定程度上等价于平均池化)

参数kernel_size, stride支持的数据类型:

int,池化窗口的宽和高相等tuple数组(两个数字的),第一个元素是池化窗口的高,第二个是宽

Note

如果某个特殊的输入导致这个输入关于幂指数p的求和是0,那上述池化函数在这一点是没有意义的。在实际实现过程中,此点的梯度被设置为0。

Parameters:

- kernel_size: 池化窗口的大小

- stride:池化窗口移动的步长。默认值是

kernel_size - ceil_mode: 当此参数被设置为

True时,在计算输出大小的时候将使用向下取整代替向上取整

Shape:

-

输入:

-

输出:

, 其中

, 其中

例子:

>>> # power-2 pool of square window of size=3, stride=2

>>> m = nn.LPPool2d(2, 3, stride=2)

>>> # pool of non-square window of power 1.2

>>> m = nn.LPPool2d(1.2, (3, 2), stride=(2, 1))

>>> input = torch.randn(20, 16, 50, 32)

>>> output = m(input)

AdaptiveMaxPool1d

class torch.nn.AdaptiveMaxPool1d(output_size, return_indices=False)

对输入的多通道信号进行1维的自适应最大池化操作。

此池化层可以通过指定输出大小H,将任意输入大小的输入强行的池化到指定的输出大小。不过输入和输出特征的通道数不会变化。

Parameters:

- output_size – 指定的输出大小H

- return_indices – 如果此参数设置为

True, 那么在池化操作结束后,返回池化输出结果的同时也会返回每个池化区域中,最大值的位置信息。这些信息在nn.MaxUnpool1d()可以被用到。此参数默认为False

例子

>>> # target output size of 5

>>> m = nn.AdaptiveMaxPool1d(5)

>>> input = torch.randn(1, 64, 8)

>>> output = m(input)

AdaptiveMaxPool2d

class torch.nn.AdaptiveMaxPool2d(output_size, return_indices=False)

对输入的多通道信号进行2维的自适应最大池化操作。

此池化层可以通过指定输出大小H x W,将任意输入大小的输入强行的池化到指定的输出大小。不过输入和输出特征的通道数不会变化。

Parameters:

- output_size – 指定的输出大小H x W。此参数支持的数据类型可以是一个元组(H, W),又或者是一个单独的

intH(等价于H x H)。H 和 W这两个参数支持输入一个int又或者是None,None表示此输出维度的大小等价于输入数据此维度的大小 - return_indices – 如果此参数设置为

True, 那么在池化操作结束后,返回池化输出结果的同时也会返回每个池化区域中,最大值的位置信息。这些信息在nn.MaxUnpool2d()可以被用到。此参数默认为False

例子

>>> # target output size of 5x7

>>> m = nn.AdaptiveMaxPool2d((5,7))

>>> input = torch.randn(1, 64, 8, 9)

>>> output = m(input)

>>> # target output size of 7x7 (square)

>>> m = nn.AdaptiveMaxPool2d(7)

>>> input = torch.randn(1, 64, 10, 9)

>>> output = m(input)

>>> # target output size of 10x7

>>> m = nn.AdaptiveMaxPool2d((None, 7))

>>> input = torch.randn(1, 64, 10, 9)

>>> output = m(input)

AdaptiveMaxPool3d

class torch.nn.AdaptiveMaxPool3d(output_size, return_indices=False)

对输入的多通道信号进行3维的自适应最大池化操作。

此池化层可以通过指定输出大小D x H x W,将任意输入大小的输入强行的池化到指定的输出大小。不过输入和输出特征的通道数不会变化。

Parameters:

- output_size – 指定的输出大小D x H x W。此参数支持的数据类型可以是一个元组(D, H, W),又或者是一个单独的

intD(等价于D x D x D)。D, H 和 W这三个参数支持输入一个int又或者是None,None表示此输出维度的大小等价于输入数据此维度的大小 - return_indices – 如果此参数设置为

True, 那么在池化操作结束后,返回池化输出结果的同时也会返回每个池化区域中,最大值的位置信息。这些信息在nn.MaxUnpool3d()可以被用到。此参数默认为False

例子

>>> # target output size of 5x7x9

>>> m = nn.AdaptiveMaxPool3d((5,7,9))

>>> input = torch.randn(1, 64, 8, 9, 10)

>>> output = m(input)

>>> # target output size of 7x7x7 (cube)

>>> m = nn.AdaptiveMaxPool3d(7)

>>> input = torch.randn(1, 64, 10, 9, 8)

>>> output = m(input)

>>> # target output size of 7x9x8

>>> m = nn.AdaptiveMaxPool3d((7, None, None))

>>> input = torch.randn(1, 64, 10, 9, 8)

>>> output = m(input)

AdaptiveAvgPool1d

class torch.nn.AdaptiveAvgPool1d(output_size)

对输入的多通道信号进行1维的自适应平均池化操作。

此池化层可以通过指定输出大小H,将任意输入大小的输入强行的池化到指定的输出大小。不过输入和输出特征的通道数不会变化。

Parameters:

- output_size – 指定的输出大小H

例子

>>> # target output size of 5

>>> m = nn.AdaptiveAvgPool1d(5)

>>> input = torch.randn(1, 64, 8)

>>> output = m(input)

AdaptiveAvgPool2d

class torch.nn.AdaptiveAvgPool2d(output_size)

对输入的多通道信号进行2维的自适应平均池化操作。

此池化层可以通过指定输出大小H x W,将任意输入大小的输入强行的池化到指定的输出大小。不过输入和输出特征的通道数不会变化。

Parameters:

- output_size – 指定的输出大小H x W。此参数支持的数据类型可以是一个元组(H, W),又或者是一个单独的

intH(等价于H x H)。H 和 W这两个参数支持输入一个int又或者是None,None表示此输出维度的大小等价于输入数据此维度的大小

例子

>>> # target output size of 5x7

>>> m = nn.AdaptiveAvgPool2d((5,7))

>>> input = torch.randn(1, 64, 8, 9)

>>> output = m(input)

>>> # target output size of 7x7 (square)

>>> m = nn.AdaptiveAvgPool2d(7)

>>> input = torch.randn(1, 64, 10, 9)

>>> output = m(input)

>>> # target output size of 10x7

>>> m = nn.AdaptiveMaxPool2d((None, 7))

>>> input = torch.randn(1, 64, 10, 9)

>>> output = m(input)

AdaptiveAvgPool3d

class torch.nn.AdaptiveAvgPool3d(output_size)

对输入的多通道信号进行3维的自适应平均池化操作。

此池化层可以通过指定输出大小D x H x W,将任意输入大小的输入强行的池化到指定的输出大小。不过输入和输出特征的通道数不会变化。

Parameters:

- output_size – 指定的输出大小D x H x W。此参数支持的数据类型可以是一个元组(D, H, W),又或者是一个单独的

intD(等价于D x D x D)。D, H 和 W这三个参数支持输入一个int又或者是None,None表示此输出维度的大小等价于输入数据此维度的大小

例子

>>> # target output size of 5x7x9

>>> m = nn.AdaptiveAvgPool3d((5,7,9))

>>> input = torch.randn(1, 64, 8, 9, 10)

>>> output = m(input)

>>> # target output size of 7x7x7 (cube)

>>> m = nn.AdaptiveAvgPool3d(7)

>>> input = torch.randn(1, 64, 10, 9, 8)

>>> output = m(input)

>>> # target output size of 7x9x8

>>> m = nn.AdaptiveMaxPool3d((7, None, None))

>>> input = torch.randn(1, 64, 10, 9, 8)

>>> output = m(input)

填充层(Padding layers)

ReflectionPad1d

class torch.nn.ReflectionPad1d(padding)

以输入张量的各边界为轴,通过对输入张量数据的进行镜像复制的方式来对输入张量进行填充操作。

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个两个元素的元组,那么按照 ( , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ReflectionPad1d(2)

>>> input = torch.arange(8, dtype=torch.float).reshape(1, 2, 4)

>>> input

tensor([[[0., 1., 2., 3.],

[4., 5., 6., 7.]]])

>>> m(input)

tensor([[[2., 1., 0., 1., 2., 3., 2., 1.],

[6., 5., 4., 5., 6., 7., 6., 5.]]])

>>> m(input)

tensor([[[2., 1., 0., 1., 2., 3., 2., 1.],

[6., 5., 4., 5., 6., 7., 6., 5.]]])

>>> # using different paddings for different sides

>>> m = nn.ReflectionPad1d((3, 1))

>>> m(input)

tensor([[[3., 2., 1., 0., 1., 2., 3., 2.],

[7., 6., 5., 4., 5., 6., 7., 6.]]])

ReflectionPad2d

class torch.nn.ReflectionPad2d(padding)

以输入张量的各边界为轴,通过对输入张量数据的进行镜像复制的方式来对输入张量进行填充操作。

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个四个元素的元组,那么按照( , ,  , ,  , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

-

输入:

-

输出:

其中

其中

示例:

>>> m = nn.ReflectionPad2d(2)

>>> input = torch.arange(9, dtype=torch.float).reshape(1, 1, 3, 3)

>>> input

tensor([[[[0., 1., 2.],

[3., 4., 5.],

[6., 7., 8.]]]])

>>> m(input)

tensor([[[[8., 7., 6., 7., 8., 7., 6.],

[5., 4., 3., 4., 5., 4., 3.],

[2., 1., 0., 1., 2., 1., 0.],

[5., 4., 3., 4., 5., 4., 3.],

[8., 7., 6., 7., 8., 7., 6.],

[5., 4., 3., 4., 5., 4., 3.],

[2., 1., 0., 1., 2., 1., 0.]]]])

>>> # using different paddings for different sides

>>> m = nn.ReflectionPad2d((1, 1, 2, 0))

>>> m(input)

tensor([[[[7., 6., 7., 8., 7.],

[4., 3., 4., 5., 4.],

[1., 0., 1., 2., 1.],

[4., 3., 4., 5., 4.],

[7., 6., 7., 8., 7.]]]])

ReplicationPad1d

class torch.nn.ReplicationPad1d(padding)

通过复制输入张量边界元素的方式对输入张量进行填充操作。

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个两个元素的元组,那么按照 ( , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ReplicationPad1d(2)

>>> input = torch.arange(8, dtype=torch.float).reshape(1, 2, 4)

>>> input

tensor([[[0., 1., 2., 3.],

[4., 5., 6., 7.]]])

>>> m(input)

tensor([[[0., 0., 0., 1., 2., 3., 3., 3.],

[4., 4., 4., 5., 6., 7., 7., 7.]]])

>>> # using different paddings for different sides

>>> m = nn.ReplicationPad1d((3, 1))

>>> m(input)

tensor([[[0., 0., 0., 0., 1., 2., 3., 3.],

[4., 4., 4., 4., 5., 6., 7., 7.]]])

ReplicationPad2d

class torch.nn.ReplicationPad2d(padding)

通过复制输入张量边界元素的方式对输入张量进行填充操作。

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个四个元素的元组,那么按照( , ,  , ,  , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ReplicationPad2d(2)

>>> input = torch.arange(9, dtype=torch.float).reshape(1, 1, 3, 3)

>>> input

tensor([[[[0., 1., 2.],

[3., 4., 5.],

[6., 7., 8.]]]])

>>> m(input)

tensor([[[[0., 0., 0., 1., 2., 2., 2.],

[0., 0., 0., 1., 2., 2., 2.],

[0., 0., 0., 1., 2., 2., 2.],

[3., 3., 3., 4., 5., 5., 5.],

[6., 6., 6., 7., 8., 8., 8.],

[6., 6., 6., 7., 8., 8., 8.],

[6., 6., 6., 7., 8., 8., 8.]]]])

>>> # using different paddings for different sides

>>> m = nn.ReplicationPad2d((1, 1, 2, 0))

>>> m(input)

tensor([[[[0., 0., 1., 2., 2.],

[0., 0., 1., 2., 2.],

[0., 0., 1., 2., 2.],

[3., 3., 4., 5., 5.],

[6., 6., 7., 8., 8.]]]])

ReplicationPad3d

class torch.nn.ReplicationPad3d(padding)

通过复制输入张量边界元素的方式对输入张量进行填充操作。

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个六个元素的元组,那么按照( , ,  , ,  , ,  , ,  , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ReplicationPad3d(3)

>>> input = torch.randn(16, 3, 8, 320, 480)

>>> output = m(input)

>>> # using different paddings for different sides

>>> m = nn.ReplicationPad3d((3, 3, 6, 6, 1, 1))

>>> output = m(input)

ZeroPad2d

class torch.nn.ZeroPad2d(padding)

通过在各边上填充0的方式对输入张量进行填充操作。

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个四个元素的元组,那么按照( , ,  , ,  , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ZeroPad2d(2)

>>> input = torch.randn(1, 1, 3, 3)

>>> input

tensor([[[[-0.1678, -0.4418, 1.9466],

[ 0.9604, -0.4219, -0.5241],

[-0.9162, -0.5436, -0.6446]]]])

>>> m(input)

tensor([[[[ 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, -0.1678, -0.4418, 1.9466, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.9604, -0.4219, -0.5241, 0.0000, 0.0000],

[ 0.0000, 0.0000, -0.9162, -0.5436, -0.6446, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000, 0.0000]]]])

>>> # using different paddings for different sides

>>> m = nn.ZeroPad2d((1, 1, 2, 0))

>>> m(input)

tensor([[[[ 0.0000, 0.0000, 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000, 0.0000, 0.0000],

[ 0.0000, -0.1678, -0.4418, 1.9466, 0.0000],

[ 0.0000, 0.9604, -0.4219, -0.5241, 0.0000],

[ 0.0000, -0.9162, -0.5436, -0.6446, 0.0000]]]])

ConstantPad1d

class torch.nn.ConstantPad1d(padding, value)

通过在各边上填充固定数字的方式对输入张量进行填充操作

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个两个元素的元组,那么按照 ( , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ConstantPad1d(2, 3.5)

>>> input = torch.randn(1, 2, 4)

>>> input

tensor([[[-1.0491, -0.7152, -0.0749, 0.8530],

[-1.3287, 1.8966, 0.1466, -0.2771]]])

>>> m(input)

tensor([[[ 3.5000, 3.5000, -1.0491, -0.7152, -0.0749, 0.8530, 3.5000,

3.5000],

[ 3.5000, 3.5000, -1.3287, 1.8966, 0.1466, -0.2771, 3.5000,

3.5000]]])

>>> m = nn.ConstantPad1d(2, 3.5)

>>> input = torch.randn(1, 2, 3)

>>> input

tensor([[[ 1.6616, 1.4523, -1.1255],

[-3.6372, 0.1182, -1.8652]]])

>>> m(input)

tensor([[[ 3.5000, 3.5000, 1.6616, 1.4523, -1.1255, 3.5000, 3.5000],

[ 3.5000, 3.5000, -3.6372, 0.1182, -1.8652, 3.5000, 3.5000]]])

>>> # using different paddings for different sides

>>> m = nn.ConstantPad1d((3, 1), 3.5)

>>> m(input)

tensor([[[ 3.5000, 3.5000, 3.5000, 1.6616, 1.4523, -1.1255, 3.5000],

[ 3.5000, 3.5000, 3.5000, -3.6372, 0.1182, -1.8652, 3.5000]]])

ConstantPad2d

class torch.nn.ConstantPad2d(padding, value)

通过在各边上填充固定数字的方式对输入张量进行填充操作

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个四个元素的元组,那么按照( , ,  , ,  , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ConstantPad2d(2, 3.5)

>>> input = torch.randn(1, 2, 2)

>>> input

tensor([[[ 1.6585, 0.4320],

[-0.8701, -0.4649]]])

>>> m(input)

tensor([[[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 1.6585, 0.4320, 3.5000, 3.5000],

[ 3.5000, 3.5000, -0.8701, -0.4649, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000]]])

>>> m(input)

tensor([[[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 1.6585, 0.4320, 3.5000, 3.5000],

[ 3.5000, 3.5000, -0.8701, -0.4649, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000, 3.5000]]])

>>> # using different paddings for different sides

>>> m = nn.ConstantPad2d((3, 0, 2, 1), 3.5)

>>> m(input)

tensor([[[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000],

[ 3.5000, 3.5000, 3.5000, 1.6585, 0.4320],

[ 3.5000, 3.5000, 3.5000, -0.8701, -0.4649],

[ 3.5000, 3.5000, 3.5000, 3.5000, 3.5000]]])

ConstantPad3d

class torch.nn.ConstantPad3d(padding, value)

通过在各边上填充固定数字的方式对输入张量进行填充操作

对于N维的填充操作,调用torch.nn.functional.pad()。

| Parameters: | padding (int, tuple) – 要填充的范围大小。如果输入数据是一个int, 那各个边界上都会填充同样大小的数据。如果是一个六个元素的元组,那么按照( , ,  , ,  , ,  , ,  , ,  )的大小设定来在各边上填充。 )的大小设定来在各边上填充。 |

|---|---|

Shape:

- 输入:

- 输出:

其中

其中

示例:

>>> m = nn.ConstantPad3d(3, 3.5)

>>> input = torch.randn(16, 3, 10, 20, 30)

>>> output = m(input)

>>> # using different paddings for different sides

>>> m = nn.ConstantPad3d((3, 3, 6, 6, 0, 1), 3.5)

>>> output = m(input)

非线性激活(加权求和,非线性) ( Non-linear activations (weighted sum, nonlinearity))

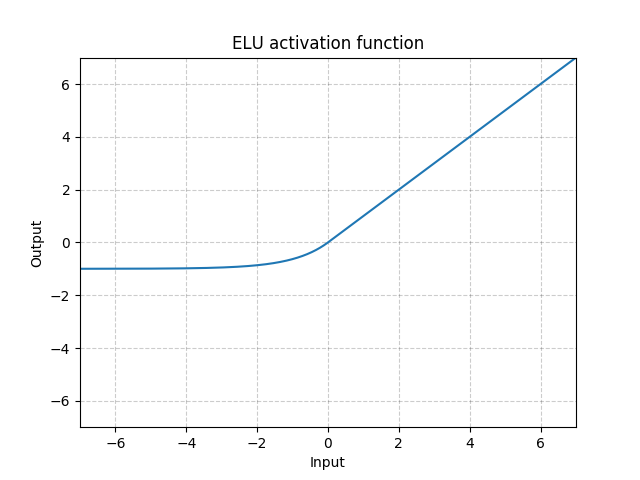

ELU

class torch.nn.ELU(alpha=1.0, inplace=False)

将下面的元素级函数应用到输入张量上:

Parameters:

- alpha – ELU操作的

值。 默认: 1.0

值。 默认: 1.0 - inplace – 是否进行原位操作。 默认:

False

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.ELU()

>>> input = torch.randn(2)

>>> output = m(input)

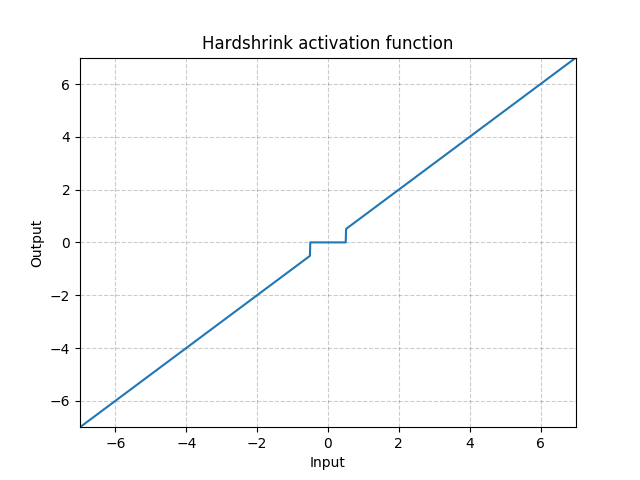

Hardshrink

class torch.nn.Hardshrink(lambd=0.5)

将下面的元素级hard shrinkage函数应用到输入张量上:

| Parameters: | lambd – Hardshrink运算中的  值。 默认: 0.5 值。 默认: 0.5 |

|---|---|

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Hardshrink()

>>> input = torch.randn(2)

>>> output = m(input)

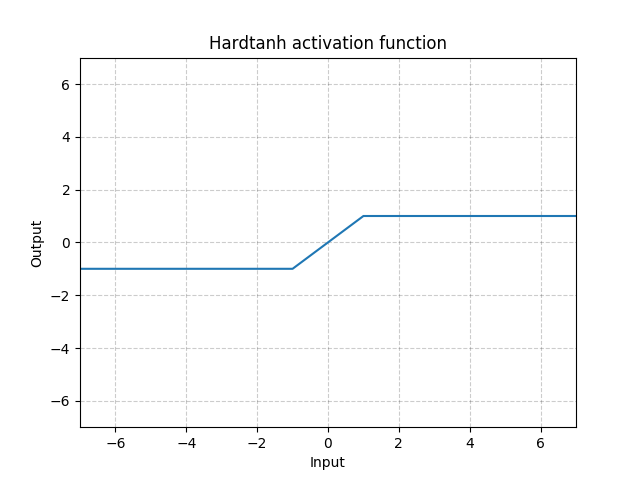

Hardtanh

class torch.nn.Hardtanh(min_val=-1.0, max_val=1.0, inplace=False, min_value=None, max_value=None)

将下面的元素级HardTanh函数应用到输入张量上。

HardTanh函数定义如下:

线性区域 的大小可以通过设置

的大小可以通过设置min_val 参数 max_val来进行调整。

参数:

- min_val – 线性区域的下限. 默认: -1

- max_val – 线性区域的上限. 默认: 1

- inplace – 是否进行原位操作。 默认:

False

之前版本的min_value 和 max_value 参数已经被废弃掉了,改为min_val 和 max_val参数。

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Hardtanh(-2, 2)

>>> input = torch.randn(2)

>>> output = m(input)

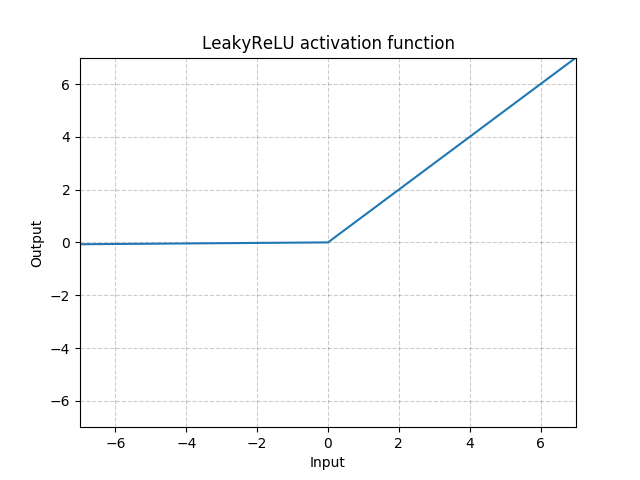

LeakyReLU

class torch.nn.LeakyReLU(negative_slope=0.01, inplace=False)

将下面的元素级函数应用到输入张量上:

或

Parameters:

- negative_slope – 控制负数范围函数的斜率。 默认: 1e-2

- inplace – 是否进行原位操作。 默认:

False

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.LeakyReLU(0.1)

>>> input = torch.randn(2)

>>> output = m(input)

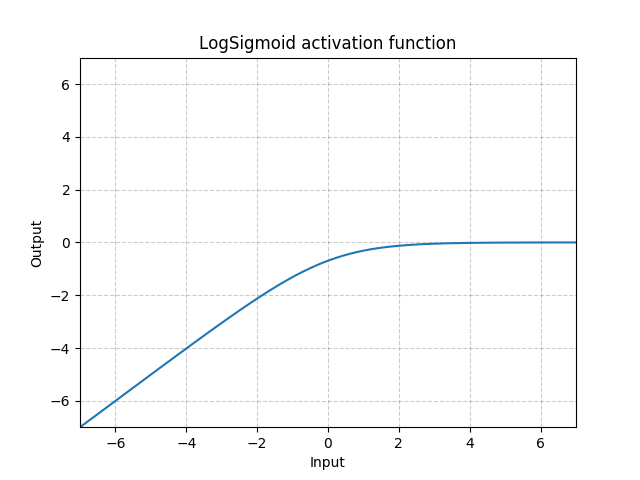

LogSigmoid

class torch.nn.LogSigmoid

将下面的元素级函数LogSigmoid应用到输入张量上:(此处漏了一张图,后期补一下)

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.LogSigmoid()

>>> input = torch.randn(2)

>>> output = m(input)

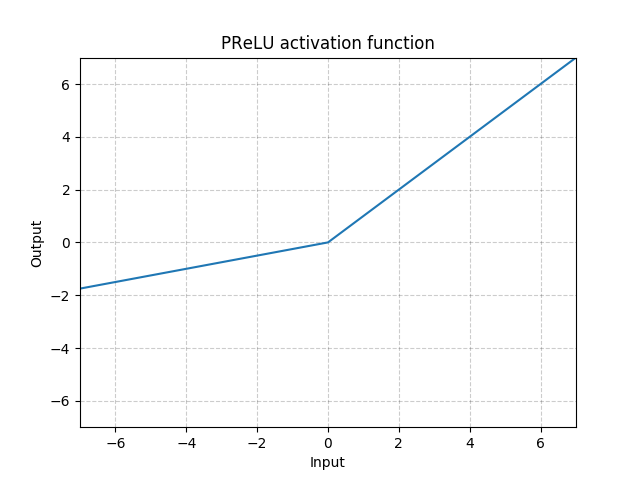

PReLU

class torch.nn.PReLU(num_parameters=1, init=0.25)

将下面的元素级函数应用到输入张量上:

或

此处  是一个可学习的参数。 如果在调用

是一个可学习的参数。 如果在调用nn.PReLU()函数的时候没有传入参数,那么会默认在所有的输入通道上应用同一个 参数。 如果以

参数。 如果以nn.PReLU(nChannels)这种方式调用, 每个输入通道都会有一个单独的 参数。

参数。

Note

想要学一个好的 参数,最好不要用weight decay。

参数,最好不要用weight decay。

Note

通道维度是输入张量的第二个维度。当输入张量的维度数 < 2的时候,那此输入张量就没有通道维度,而此时其通道数被认为是1。

Parameters:

- num_parameters (int) – 要进行训练学习的

参数的数量。尽管此函数的输入是一个整形,但此函数要求输入的整形只能为两个值,1或者输入张量的通道数。默认:1

参数的数量。尽管此函数的输入是一个整形,但此函数要求输入的整形只能为两个值,1或者输入张量的通道数。默认:1 - init (float) –

的初始值,默认: 0.25

的初始值,默认: 0.25

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

| Variables: | weight (Tensor) – 大小为num_parameters的可学习参数。The attr:dtype is default to(这句话有点问题, to后面漏掉了) |

|---|---|

示例:

>>> m = nn.PReLU()

>>> input = torch.randn(2)

>>> output = m(input)

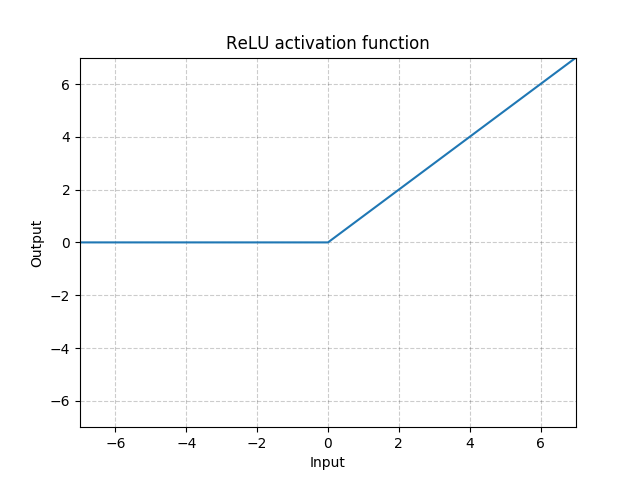

ReLU

class torch.nn.ReLU(inplace=False)

将元素级线性整流函数函数应用到输入张量上 Applies the rectified linear unit function element-wise

Applies the rectified linear unit function element-wise

| Parameters: | inplace – 是否进行原位操作。 默认: False |

|---|---|

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.ReLU()

>>> input = torch.randn(2)

>>> output = m(input)

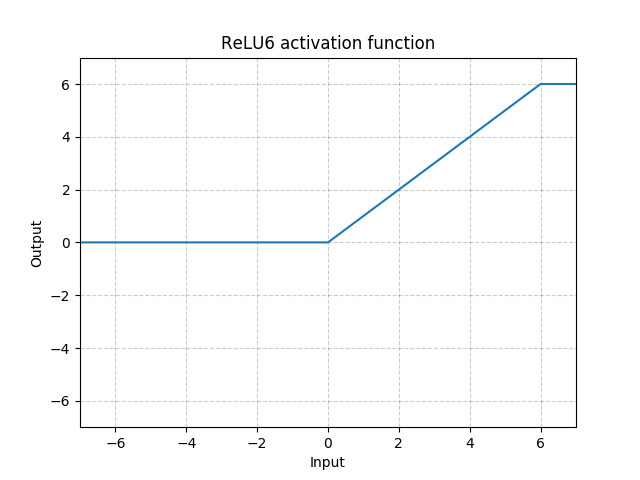

ReLU6

class torch.nn.ReLU6(inplace=False)

将下面的元素级函数应用到输入张量上:

| Parameters: | inplace – 是否进行原位操作。 默认: False |

|---|---|

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.ReLU6()

>>> input = torch.randn(2)

>>> output = m(input)

RReLU

class torch.nn.RReLU(lower=0.125, upper=0.3333333333333333, inplace=False)

将元素级随机线性整流函数函数应用到输入张量上,详情此文章:

Empirical Evaluation of Rectified Activations in Convolutional Network.

此函数定义如下:

其中  是从此均匀分布中采样而来:

是从此均匀分布中采样而来: .

.

Parameters:

- lower – 均匀分布下限, 默认:

- upper – 均匀分布上限,默认:

- inplace – 是否进行原位操作。 默认:

False

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.RReLU(0.1, 0.3)

>>> input = torch.randn(2)

>>> output = m(input)

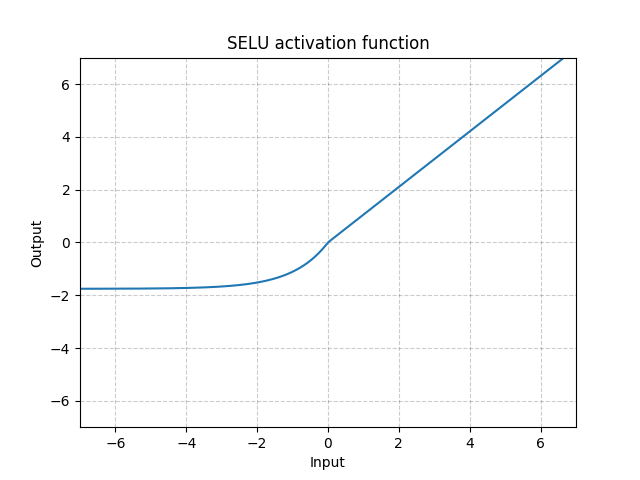

SELU

class torch.nn.SELU(inplace=False)

将下面的元素级函数应用到输入张量上:

其中  而且

而且  .

.

More details can be found in the paper Self-Normalizing Neural Networks .

| Parameters: | inplace (bool, optional) – 是否进行原位操作。 默认: False |

|---|---|

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.SELU()

>>> input = torch.randn(2)

>>> output = m(input)

CELU

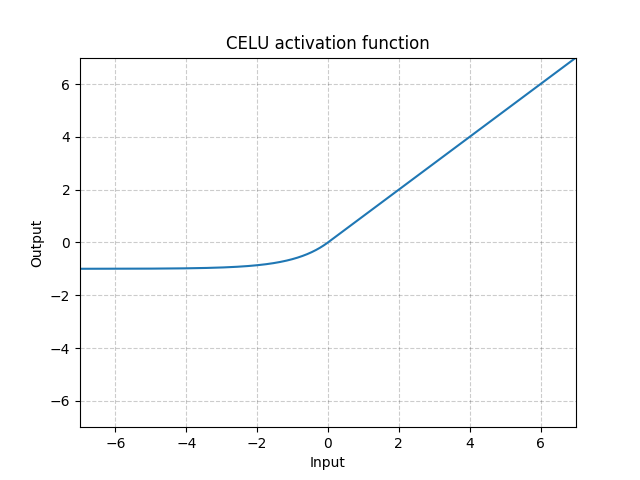

class torch.nn.CELU(alpha=1.0, inplace=False)

将下面的元素级函数应用到输入张量上:

更多细节请见paper: Continuously Differentiable Exponential Linear Units .

Parameters:

- alpha – CELU操作中的

值,默认: 1.0

值,默认: 1.0 - inplace – 是否进行原位操作。 默认:

False

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.CELU()

>>> input = torch.randn(2)

>>> output = m(input)

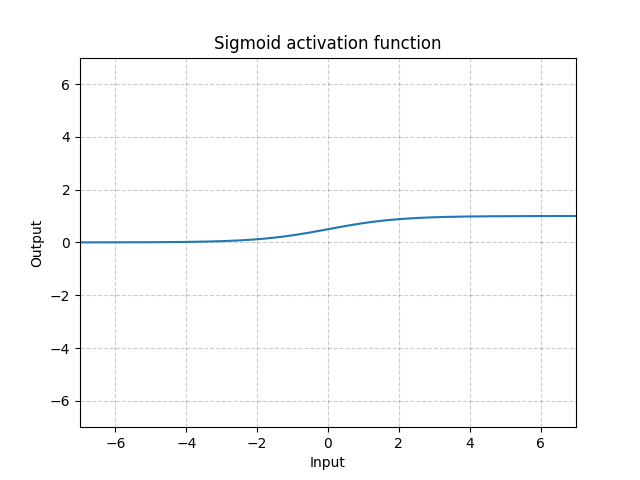

Sigmoid

class torch.nn.Sigmoid

将下面的元素级函数应用到输入张量上:

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Sigmoid()

>>> input = torch.randn(2)

>>> output = m(input)

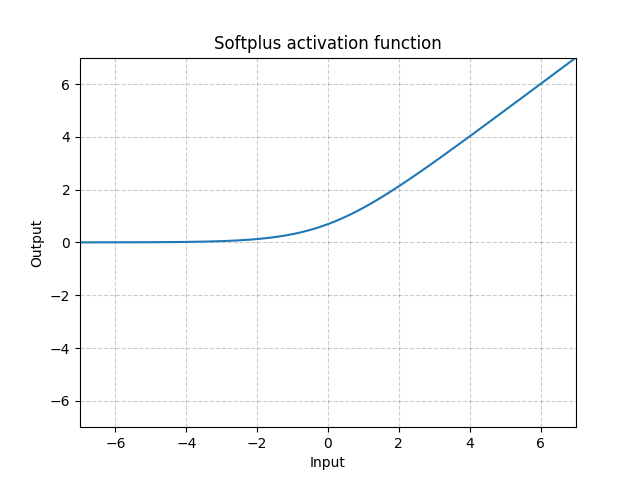

Softplus

class torch.nn.Softplus(beta=1, threshold=20)

将下面的元素级函数应用到输入张量上:

SoftPlus是一个平滑的类ReLU函数,可以用于将输出结果规范到全正。

为了数值稳定性,在实现此函数的过程中,当 x 超过某个特定值之后,我们会将此函数转化为一个线性函数。

Parameters:

- beta – Softplus操作的

值,默认: 1

值,默认: 1 - threshold – 将函数转化为线性函数的阈值, 默认: 20

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Softplus()

>>> input = torch.randn(2)

>>> output = m(input)

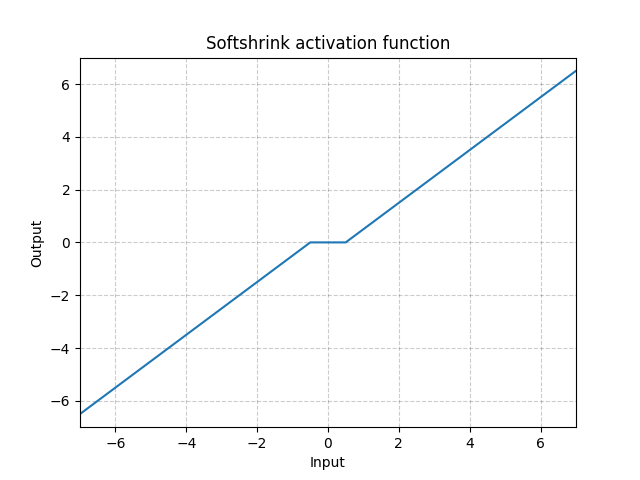

Softshrink

class torch.nn.Softshrink(lambd=0.5)

将下面的元素级软收缩函数应用到输入张量上:

| Parameters: | lambd – 软收缩运算的 值,默认: 0.5 值,默认: 0.5 |

|---|---|

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Softshrink()

>>> input = torch.randn(2)

>>> output = m(input)

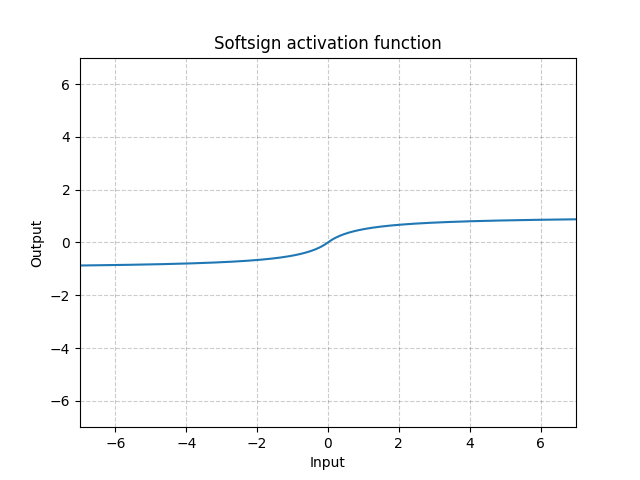

Softsign

class torch.nn.Softsign

将下面的元素级函数应用到输入张量上:

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Softsign()

>>> input = torch.randn(2)

>>> output = m(input)

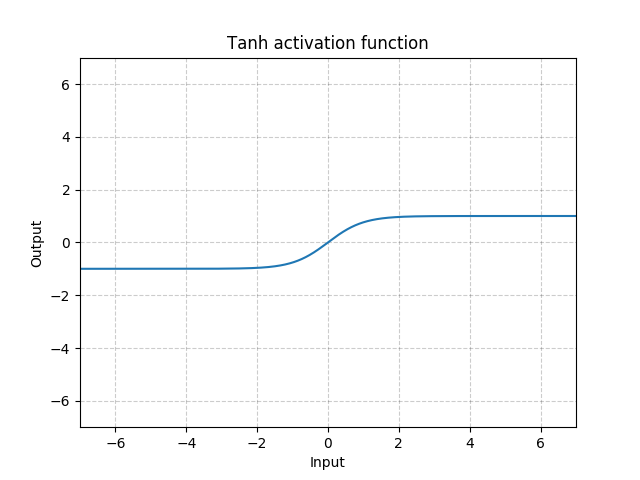

Tanh

class torch.nn.Tanh

将下面的元素级函数应用到输入张量上:

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Tanh()

>>> input = torch.randn(2)

>>> output = m(input)

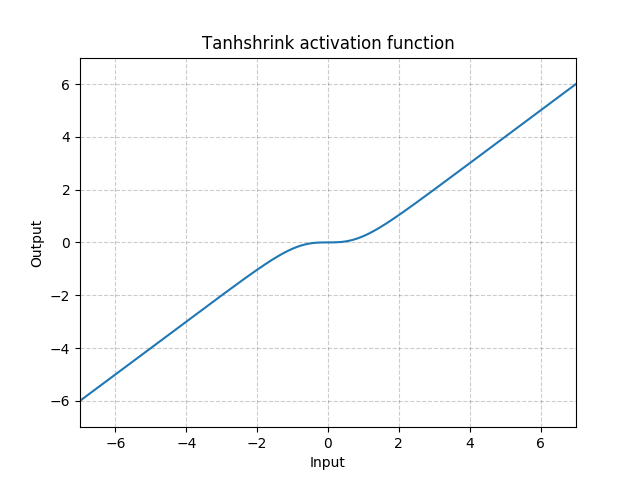

Tanhshrink

class torch.nn.Tanhshrink

将下面的元素级函数应用到输入张量上:

Shape:

- 输入:

其中

其中 *代表支持任意大小的附加维度 - 输出:

, 与输入向量保持一样的形状大小

, 与输入向量保持一样的形状大小

示例:

>>> m = nn.Tanhshrink()

>>> input = torch.randn(2)

>>> output = m(input)

Threshold

class torch.nn.Threshold(threshold, value, inplace=False)

使用阈值过滤输入张量的每个元素

阈值被定义如下:

Parameters:

- threshold – 阈值大小